[WITH CODE] Data transformations: Data shape and predictive features

Alternative data shape transformation

Table of contents:

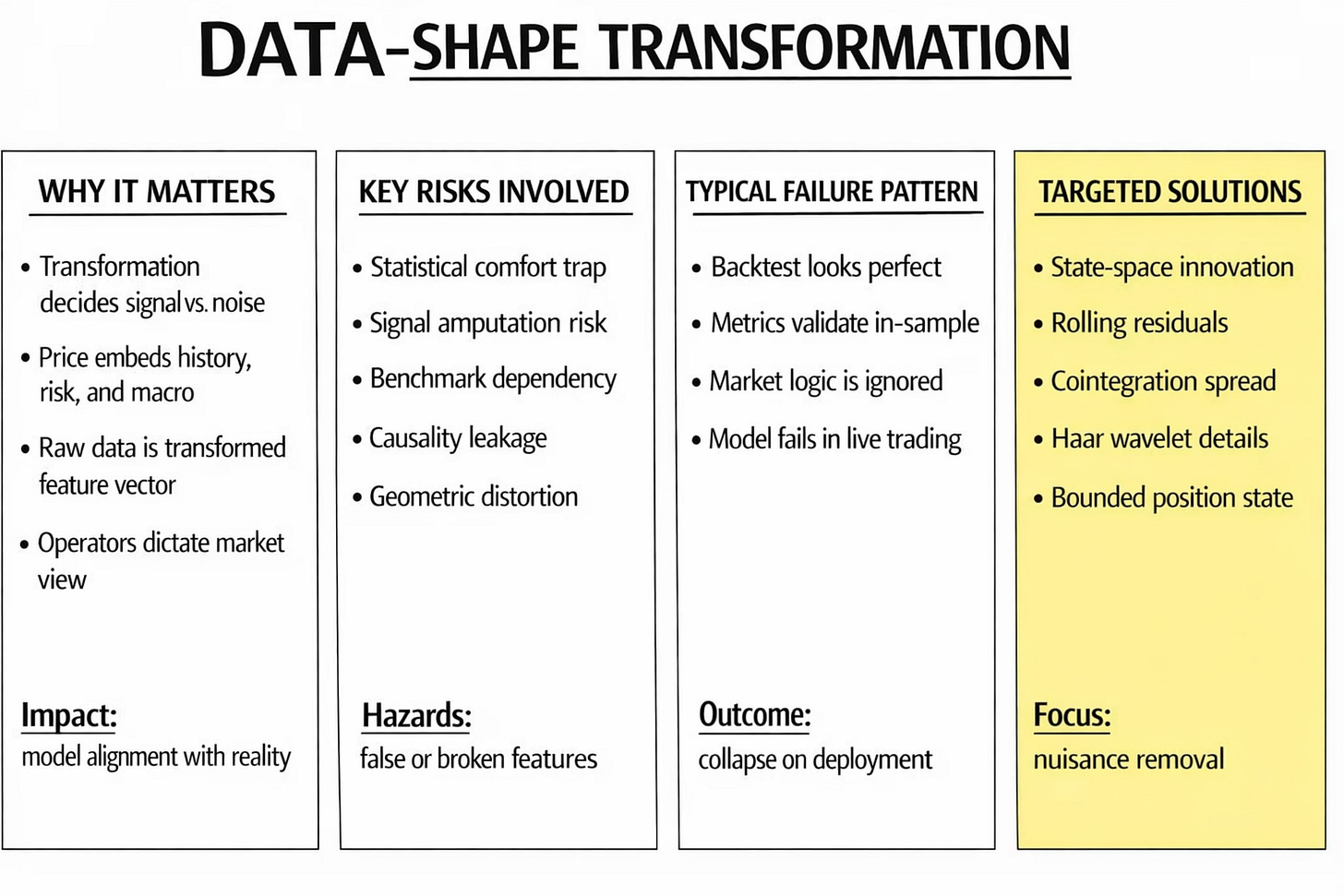

Introduction.

Data-Shape transformation.

Identifying the risks and limitations.

Data shape transformations for predictive features.

State-space approach.

Dynamic benchmark neutralization.

Stochastic trend removal via cointegration.

Multiscale isolation approach.

Bounded state encoding.

Before you begin, remember that you have an index with the newsletter content organized by clicking on “Read the newsletter index” in this image.

Introduction

Imagine that a team downloads a price series, defines a target, applies a transformation, and moves on to signal design, model fitting, validation, and execution. That sequence looks efficient. However, the transformation of the data is the first act of model construction.

That is why data-shape transformation sits at the true front line of feature engineering. The problem is whether the operator used to create that series preserves the economic structure that generates predictive edge. A feature can look impeccable under statistical diagnostics and still be useless in live trading. It can pass every formal test of stability while removing the component that contained the alpha.

This article examines that hidden fault line. It argues that data-shape transformation must be treated as a structural modeling choice rather than a preprocessing convenience. We will identify the main risks introduced by transformation operators, explain how they distort the topology of predictive information, and then study five targeted transformations designed for distinct market nuisances: state-space innovations, rolling benchmark residuals, cointegration spreads, multiscale Haar details, and rolling range positions. The objective is not to make features look cleaner. The objective is to ensure that the shape of the data remains faithful to the market mechanism the model is supposed to learn.

Every transformation enforces a decision about relevance. It selects one part of the market path and suppresses another. It may emphasize short-horizon variation, eliminate common drift, compress local extremes, or redefine the position of the current price relative to its recent history. Before any model is trained, the researcher has already imposed a theory of what matters.

By the way, you can go deeper in this topic by exploring this paper.

That choice is decisive because financial data are layered. A single price series contains slow structural movement, temporary dislocations, benchmark comovement, volatility clustering, and random noise at the same time. A predictive model is never interested in all these layers equally. It depends on a narrow component that matches the strategy horizon and economic logic. The transformation either isolates that component or removes it.

Data-Shape transformation

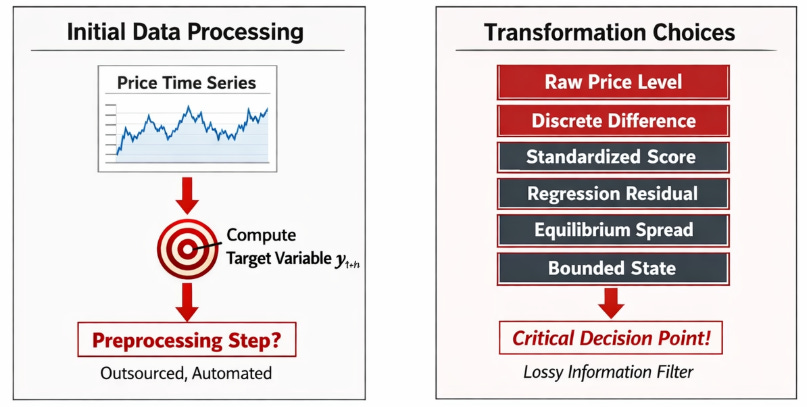

The starting phase of feature engineering contains a severe trap because it masquerades as a trivial data processing step. A quant team downloads a time series of prices, computes a target variable, and faces the decision of whether the predictive model should ingest the raw level, a discrete difference, a standardized statistical score, a regression residual, an equilibrium spread, or a bounded state variable. The standard academic and industry reflex is to treat this decision as a technical prerequisite, often labeled preprocessing or data normalization. That classification is a mistake.

In many pipelines, this step is outsourced to data engineers or automated via standard library defaults before the alpha researchers even begin their core modeling. This assumes that data transformation is a lossless translation of information. It is not. Once a specific transformation is applied to the raw time series, the researcher has declared what specific variation in the market path constitutes actionable information and what variation constitutes noise. If the chosen transformation is misaligned with the economic mechanism the strategy intends to exploit, the resulting model can exhibit perfect statistical behavior while remaining economically blind.

In our domain, this situation is unforgiving because the price is a cumulative, high-memory object. It aggregates historical microstructure shocks, macroeconomic regime shifts, discrete splits in volatility, slow reallocations of institutional capital, structural risk premia, and continuous benchmark movements.

A raw price level carries a massive amount of historical baggage that the short-term or medium-term target variable is invariant to. Assume the core research problem is to predict the magnitude and direction of a short-horizon log return $y_{t+h}$. The actual feature matrix the learning algorithm receives is rarely the raw price $p_t$. Instead, the model ingests a transformed object $x_t = T(\mathcal{F}_t)$, where $\mathcal{F}_t$ represents the filtration generated by the history of prices and exogenous covariates up to time $t$. The selection of the transformation operator $T$ is the actual front line of the research design. This operator dictates whether the vector $x_t$ represents a local surprise, a relative displacement, a structurally bounded state, etc.

Identifying the risks and limitations

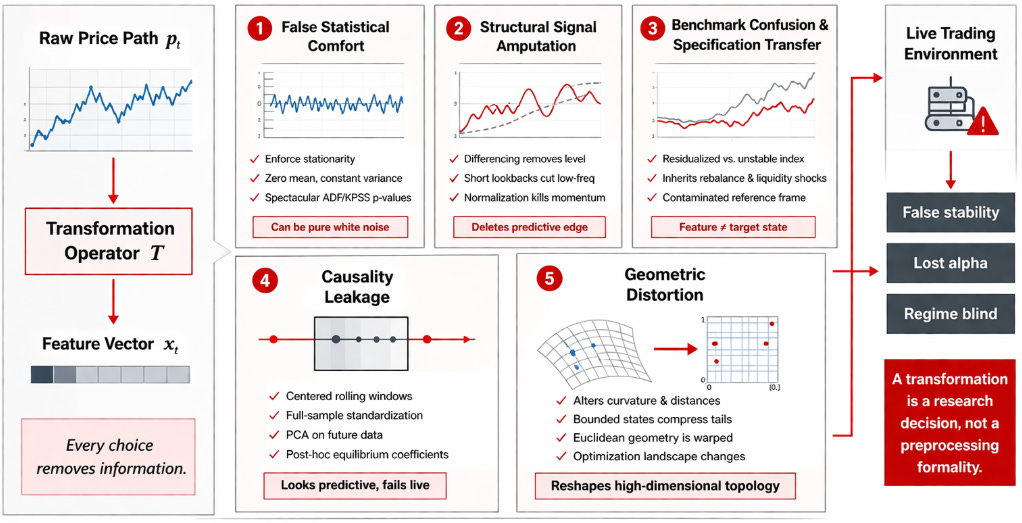

The risk surface introduced by the operator $T$ is broader and more destructive than standard statistical literature admits. Researchers frequently inherit standard transformation recipes without interrogating their geometric implications or their effect on the data topology. They compute discrete log returns $r_t = \ln(p_t) - \ln(p_{t-1})$ because financial libraries expect stationary inputs. They build rolling z-scores because the resulting chart oscillates symmetrically around zero and fits neatly into the activation functions of deep neural networks. These choices impose heavy structural assumptions. A standard return calculation deletes the absolute price level and the cumulative memory of the path. A residual calculation deletes the variance explained by the specified reference model.

The first specific risk is false statistical comfort. A transformed time series can be manipulated to exhibit perfect weak-sense stationarity, zero mean, and constant variance, while simultaneously failing to express any tradable market state. Any researcher can run an Augmented Dickey-Fuller test and reject the unit root with a spectacular p-value, or run a KPSS test and fail to reject stationarity, and then incorrectly assume the feature is ready for predictive modeling. Extracting white noise from a time series guarantees stability, but white noise contains zero predictive alpha. The researcher has converted the price into a stable sequence that is devoid of economic value.

The second risk is structural signal “amputation”. A transformation can neutralize the exact slow-moving component that carried the predictive edge. This failure mode occurs when researchers impose rigid discrete differencing, rolling normalization, or arbitrary short lookback windows without first establishing the exact holding period the systematic strategy is designed to exploit. If a macro trend-following strategy relies on three-month momentum, applying a 5-day rolling z-score to the input features will delete the low-frequency component that the model requires to identify the trend.
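A minimal sketch of this failure, under the simplifying assumption of two noiseless log-price paths that differ only in the slope of their trend. The helper `rolling_zscore` and the synthetic series are illustrative and not part of the original pipeline. On any straight line the 5-day z-score takes the same constant value, so the slope, which is exactly what a trend follower needs, never reaches the model:

```python
import numpy as np
import pandas as pd


def rolling_zscore(series: pd.Series, window: int = 5) -> pd.Series:
    """Trailing z-score: (x_t - rolling mean) / rolling std (ddof=1)."""
    roll = series.rolling(window)
    return (series - roll.mean()) / roll.std()


t = np.arange(250, dtype=float)
slow_trend = pd.Series(4.0 + 0.0005 * t)  # gentle drift in log-price
fast_trend = pd.Series(4.0 + 0.0050 * t)  # ten times steeper drift

z_slow = rolling_zscore(slow_trend).dropna()
z_fast = rolling_zscore(fast_trend).dropna()

# Both paths collapse onto the same constant: the slope information is gone.
print(z_slow.iloc[-1], z_fast.iloc[-1])
print(np.allclose(z_slow, z_fast, atol=1e-6))
```

Real prices are noisy, so the collapse is not this clean in practice, but the direction of the effect is the same: the shorter the normalization window relative to the holding period, the less of the trend survives.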

The third risk is benchmark confusion and specification transfer. When a transformation operator incorporates a secondary series—such as a benchmark ETF, a sector index, or a paired asset—the resulting feature inherits the specification risk of that external asset. A regression residual is only valid if the variable against which it is residualized represents a stable economic factor. If a researcher isolates a tech stock by residualizing it against the QQQ index, and the index undergoes a massive constituent rebalance or suffers a liquidity shock, the resulting feature vector will exhibit severe volatility that has nothing to do with the target stock’s idiosyncratic state. The feature becomes contaminated by the reference frame.

The fourth risk is causality leakage. This is the most common fatal error in systematic trading. When the transformation relies on parameters estimated using information outside the filtration Ft, the feature becomes predictive in the research environment and false in the production environment. Using centered rolling windows, applying Principal Component Analysis eigenvectors derived from the full target matrix, computing full-sample mean vectors for standardization, or extracting post-hoc equilibrium coefficients creates a matrix that cannot exist in live trading. Forward-looking bias is adept at hiding within the scaling constants of data-shape transformations.
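One hedged way to screen a transformation for this kind of leakage is a perturbation check: change only the data after time t and see whether the feature at or before t moves. The sketch below assumes a synthetic random-walk price, and the names `depends_on_future`, `trailing_mean`, `centered_mean`, and `full_sample_zscore` are hypothetical helpers introduced for illustration:

```python
import numpy as np
import pandas as pd

WINDOW = 21


def trailing_mean(s: pd.Series) -> pd.Series:
    """Uses observations t-WINDOW+1 .. t only."""
    return s.rolling(WINDOW).mean()


def centered_mean(s: pd.Series) -> pd.Series:
    """Uses observations on both sides of t."""
    return s.rolling(WINDOW, center=True).mean()


def full_sample_zscore(s: pd.Series) -> pd.Series:
    """Standardizes with the mean and std of the entire sample."""
    return (s - s.mean()) / s.std()


def depends_on_future(transform, series: pd.Series, t: int, bump: float = 25.0) -> bool:
    """True if values at or before position t change when only later data changes."""
    perturbed = series.copy()
    perturbed.iloc[t + 1:] += bump
    before = transform(series).iloc[: t + 1].to_numpy()
    after = transform(perturbed).iloc[: t + 1].to_numpy()
    return not np.allclose(before, after, equal_nan=True)


rng = np.random.default_rng(0)
prices = pd.Series(100.0 + rng.normal(0.0, 1.0, 200).cumsum())

for name, fn in [("trailing", trailing_mean),
                 ("centered", centered_mean),
                 ("full-sample z", full_sample_zscore)]:
    print(name, "leaks:", depends_on_future(fn, prices, t=120))
```

A check like this only detects dependence on future inputs; it says nothing about parameters that were tuned offline on the full sample, which need a separate audit.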

The fifth risk is geometric distortion. A transformation alters local curvature, variance persistence, variance clustering, and the relative distance metric of excursions. Consider a neural network optimizer relying on Euclidean distance to compute gradients. Mapping a log-normal price path into a bounded state space between zero and one forces the distance metric near the boundaries to behave differently from the distance metric in the center of the distribution. A raw price move that represents a massive four-standard-deviation tail event might only translate to a 0.01 shift in the bounded feature space if the state was already sitting at 0.98. Extracting state-space innovations collapses a continuous smooth drift into a localized sequence of forecast errors clustered around zero. This alters the fundamental definition of what a market burst represents to the downstream algorithm.
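The boundary compression can be made concrete with a few lines of arithmetic. This is a sketch with made-up numbers: `range_position` is a hypothetical helper, and the trailing window is assumed to keep its old minimum when the price breaks above the old maximum. The same price move is applied once from the middle of the range and once from a state of 0.98:

```python
def range_position(price: float, lo: float, hi: float, eps: float = 1e-12) -> float:
    """Position of price inside [lo, hi]; the extremes widen if price breaks out."""
    lo, hi = min(lo, price), max(hi, price)
    return (price - lo) / (hi - lo + eps)


window_lo, window_hi = 100.0, 110.0
move = 2.0  # identical raw price move in both cases

# Case 1: the move starts from the middle of the range.
shift_mid = (range_position(105.0 + move, window_lo, window_hi)
             - range_position(105.0, window_lo, window_hi))

# Case 2: the move starts from a state of 0.98 and breaks the old maximum.
shift_top = (range_position(109.8 + move, window_lo, window_hi)
             - range_position(109.8, window_lo, window_hi))

print(round(shift_mid, 4), round(shift_top, 4))
```

The mid-range move displaces the feature by about 0.20, while the breakout can displace it by at most 0.02, because the state saturates at 1.0 however far the price travels.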

The conflict between raw data and transformed geometry culminates in a specific, observable failure in the research lifecycle. A model is built on a transformed feature set. The initial teardown looks immaculate. The in-sample backtest “demonstrates” it works. The cross-validation metrics are stable across distinct market regimes. The feature importance vectors are dense and logical. The Sharpe ratio appears robust across multiple chronological subsamples, and the drawdowns are well contained.

Then… the model is advanced to paper trading or live execution, and the predictive relationship collapses. The alpha decay is instantaneous. The post-mortem analysis reveals that the algorithm never learned the underlying market mechanism. The optimization process learned the geometry introduced by the transformation operator $T$. It fit itself to sample-specific trend removal artifacts, spurious benchmark dependencies, bound-rejection behavior dictated by rolling window mechanics rather than market participants, and local scaling normalization patterns that possessed no forward-looking stability.

Data shape transformations for predictive features

If the strategy is engineered to trade benchmark-relative mispricing, then the absolute market benchmark exposure is the primary nuisance structure. If the strategy capitalizes on statistical arbitrage and pair reversion, the common stochastic trend is the primary nuisance structure. If the strategy dictates short-horizon momentum reactions, slow multi-month macroeconomic drift is the primary nuisance structure. If the strategy operates as a local-state mean-reversion classifier, the absolute nominal price level is the primary nuisance structure.

This perspective enforces a precise operational taxonomy of data-shape transformations. The state_space algorithm eliminates predictable local trends governed by the state transition equations, leaving the prediction surprise. The rolling_regression_residual algorithm eliminates time-varying benchmark exposure, yielding a relative-value deviation vector. The cointegration_spread algorithm eliminates common stochastic drift based on equilibrium vector autoregression logic, resulting in a stationary spread conducive to error-correction modeling. The haar_detail_same_length algorithm eliminates low-frequency power at a selected scale, leaving a targeted multiscale shock component. The rolling_range_position algorithm eliminates absolute nominal levels and local variance scaling, yielding a bounded coordinate variable.

These five methods represent interventions designed to blind the model to specific, targeted market nuisances. However, without the right safeguards, the same mistakes can resurface in some of them. Now that you’re aware of them, we can move forward.

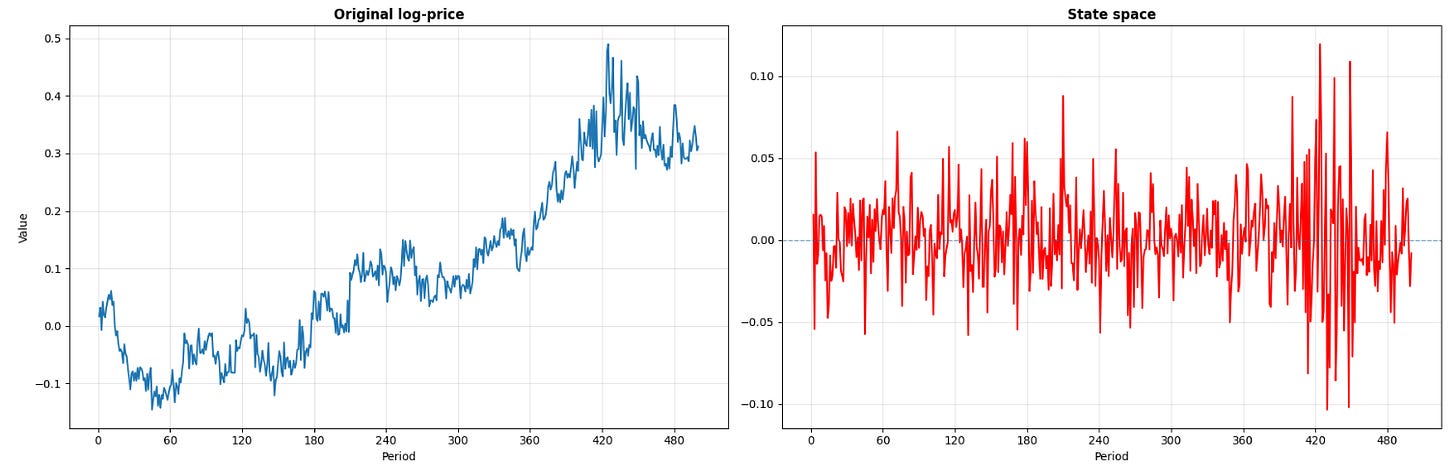

State-space approach

The first targeted transformation is the state-space innovation. In the technical literature, this is defined as the one-step-ahead prediction error derived from a local linear trend state-space model. This transformation alters the time-series geometry by removing the exact variation that the internal state estimate already expects the system to produce at time $t$. This isolation mechanism is required when the raw log-price is cumulative, exhibiting a smooth drift that masks the actual high-frequency surprise component necessary for short-horizon prediction.

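The formulation relies on setting up the observed log-price $y_t$ as the output of a local linear trend model. The system requires an observation equation and state transition equations:

$$
\begin{aligned}
y_t &= \mu_t + \varepsilon_t, & \varepsilon_t &\sim \mathcal{N}(0, \sigma_\varepsilon^2) \\
\mu_t &= \mu_{t-1} + \beta_{t-1} + \eta_t, & \eta_t &\sim \mathcal{N}(0, \sigma_\eta^2) \\
\beta_t &= \beta_{t-1} + \zeta_t, & \zeta_t &\sim \mathcal{N}(0, \sigma_\zeta^2)
\end{aligned}
$$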

Here, the unobserved latent state consists of the level $\mu_t$ and the slope $\beta_t$. The disturbances $\varepsilon_t$, $\eta_t$, and $\zeta_t$ are mutually independent Gaussian white noise processes governing the measurement error, the level shift, and the slope shift. The filter for this model operates recursively. Given the filtration up to $t-1$, it produces the optimal linear one-step-ahead prediction $E[y_t \mid \mathcal{F}_{t-1}]$. The feature we extract is the sequence $v_t$:

$$
v_t = y_t - E[y_t \mid \mathcal{F}_{t-1}]
$$

It is crucial to understand that vt represents the precise segment of the current price movement that could not be predicted by the prior dynamic state. In the context of algorithmic trading, this means the feature measures orthogonal surprise rather than raw directional motion.

The rationale for this transformation is embedded in market microstructure. A high-frequency algorithm processing order flow must differentiate between a market that ticks upward at a steady, anticipated velocity and a market that prints an identical upward tick against a declining latent trend. The nominal difference $y_t - y_{t-1}$ will record an identical positive value in both scenarios. The innovation $v_t$ will output a minor value for the first scenario and a large positive value for the second. Innovation-based feature engineering forces the learning algorithm to respect the conditional context of the regime.
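The two scenarios can be reproduced with a hand-rolled filter. The sketch below is a minimal Kalman recursion for a local linear trend model in plain NumPy; the variances are fixed by hand rather than estimated, and the function name `llt_innovations` and both synthetic paths are assumptions made for illustration:

```python
import numpy as np


def llt_innovations(y, var_eps=1.0, var_eta=0.1, var_zeta=0.01):
    """One-step-ahead forecast errors of a local linear trend Kalman filter."""
    y = np.asarray(y, dtype=float)
    T = np.array([[1.0, 1.0], [0.0, 1.0]])   # level += slope, slope persists
    Z = np.array([1.0, 0.0])                 # only the level is observed
    Q = np.diag([var_eta, var_zeta])         # level and slope shock variances
    state = np.array([y[0], 0.0])            # start at first observation, flat slope
    P = np.eye(2) * 1e6                      # large initial uncertainty
    v = np.full(len(y), np.nan)
    for t in range(1, len(y)):
        state = T @ state                    # predict with data up to t-1 only
        P = T @ P @ T.T + Q
        v[t] = y[t] - Z @ state              # innovation: what the state did not expect
        F = Z @ P @ Z + var_eps              # innovation variance
        K = P @ Z / F                        # Kalman gain
        state = state + K * v[t]             # update with the new observation
        P = P - np.outer(K, Z @ P)
    return v


tick = 0.001
with_trend = tick * np.arange(62)                          # steady climb, last move +tick
against_trend = np.r_[tick * np.arange(60, -1, -1), tick]  # steady fall, then +tick

v_with = llt_innovations(with_trend)[-1]
v_against = llt_innovations(against_trend)[-1]

# The last raw difference is the same in both paths; the innovations are not.
print(np.diff(with_trend)[-1], np.diff(against_trend)[-1])
print(v_with, v_against)
```

On these noiseless paths the uptick along the trend yields an innovation near zero, while the same uptick against the falling trend yields roughly twice the tick size, because the filter expected one more tick down.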

    import numpy as np
    import pandas as pd
    from statsmodels.tsa.statespace.structural import UnobservedComponents


    def state_space(price_series: pd.Series) -> pd.Series:
        """
        Extracts the one-step-ahead forecast error from a local linear trend
        state-space model.
        """
        # The input is assumed to already be in log-price units.
        lp = price_series.rename("log_price")
        model = UnobservedComponents(lp, level="local linear trend")
        # Note: the variance parameters are fit on the whole sample passed in.
        result = model.fit(disp=False)
        space = pd.Series(
            result.filter_results.forecasts_error[0],
            index=lp.index,
            name="state_space",
        )
        # The first forecast error is dominated by the filter initialization.
        space.iloc[0] = np.nan
        return space

However, the researcher must decide if local drift should be neutralized. For execution timing, short-term liquidity provision, and algorithmic burst-detection, neutralizing drift is necessary. Using $v_t$ makes the resulting feature matrix more robust across shifting volatility regimes because the state-space covariance matrices adapt to changing latent slopes. The downstream classifier is thus relieved of the computational burden of discovering that adaptation matrix from raw inputs.

The primary failure mode of this transformation is specification error, which occurs when the assumed dynamics of the state-space model fail to map onto the conditions of the asset’s microstructure. The standard local linear trend model relies on continuous Gaussian diffusion. If the true data generation process is instead dominated by discrete Poisson jumps (such as overnight gaps, scheduled FOMC macroeconomic releases, or sudden liquidity vacuums), the linear Gaussian assumptions break down. When a structural price jump occurs, the linear filter cannot snap to the new regime. Instead, it lags, requiring multiple timesteps for the filter gain to fully correct the latent state components. During this structural catch-up phase, the sequence $v_t$ will output an artificial cluster of large values. These values no longer represent orthogonal market surprises but a deterministic filter correction error.

Researchers are tempted by the aesthetic stability of the a posteriori residual, often extracted via two-sided smoothing algorithms provided by default in many statistical libraries. Because these smoothers utilize future price information from t+1 to T to optimize the state estimate at time t, utilizing them injects terminal forward-looking leakage into the feature matrix. The resulting in-sample backtest will generate flawless, profitable metrics, while the live production system will bleed money.

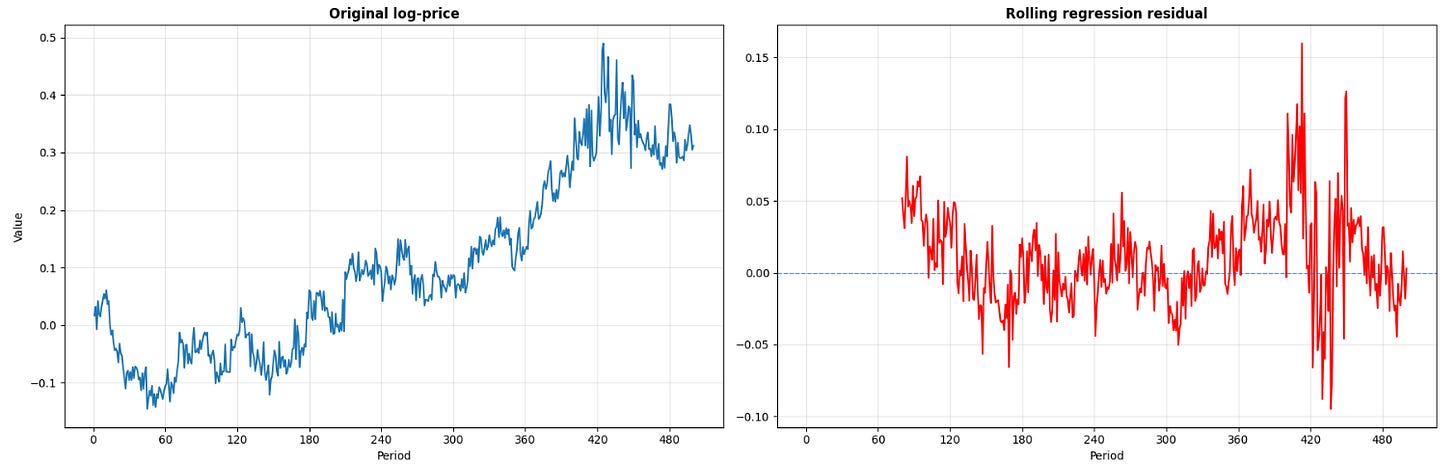

Dynamic benchmark neutralization

The second transformation targets a different geometric nuisance: time-varying external beta. The feature is the rolling Ordinary Least Squares residual. In systematic portfolio management, this is recognized as the dynamic hedge residual. The core logic dictates that the variance of the target asset $y_t$ is contaminated by the variance of an external factor $x_t$, which could represent a sector ETF, a broad index future, or a principal component basket. To model the asset’s idiosyncratic state, the researcher must regress the asset against the reference factor and isolate the residual error.

The model specifies:

$$
y_t = \alpha_t + \beta_t x_t + u_t
$$

Because the relationship between financial assets is never static, $\alpha_t$ and $\beta_t$ cannot be estimated over the full sample. They must be approximated over a rolling window of length $w$. The parameter vector is computed utilizing the local design matrix $X_{t-w+1:t}$. The resulting transformed feature is the out-of-sample or end-of-sample deviation:

$$
\hat{u}_t = y_t - \hat{\alpha}_t - \hat{\beta}_t x_t
$$

This specific subtraction is geometrically distinct from subtracting simple returns or computing a ratio. The dynamic residual projects the asset into the null space of the benchmark over the specified lookback window. The output geometry abandons the absolute coordinate system and adopts a relative, benchmark-neutral coordinate system.
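The strictly out-of-sample reading of this deviation, where the coefficients applied at time t come from the window ending at t-1, can be sketched with plain pandas. This is an illustrative variant and not the article's own function: `lagged_rolling_residual` is a hypothetical name, the closed-form slope (covariance over variance) replaces a full regression routine, and the data are synthetic:

```python
import numpy as np
import pandas as pd


def lagged_rolling_residual(y: pd.Series, x: pd.Series, window: int = 80) -> pd.Series:
    """Residual of y on x using alpha and beta estimated on the window ending at t-1."""
    beta = x.rolling(window).cov(y) / x.rolling(window).var()
    alpha = y.rolling(window).mean() - beta * x.rolling(window).mean()
    # shift(1): coefficients known at the close of t-1 are applied to the bar at t
    return y - (alpha.shift(1) + beta.shift(1) * x)


rng = np.random.default_rng(1)
x = pd.Series(4.0 + rng.normal(0.0, 0.01, 300).cumsum())  # synthetic log benchmark
y = 0.5 + 1.5 * x                                         # asset tied exactly to it
y.iloc[150] += 0.05                                       # one shock in the asset only

res = lagged_rolling_residual(y, x, window=80)
print(res.iloc[145:152])
```

With lagged coefficients the shock at position 150 appears in full in the residual, because the bar being measured never takes part in its own fit. The end-of-sample version absorbs a small part of each shock into the coefficients instead.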

    import pandas as pd
    import statsmodels.api as sm
    from statsmodels.regression.rolling import RollingOLS


    def rolling_regression_residual(
        price_series: pd.Series,
        benchmark_series: pd.Series,
        window: int = 80,
    ) -> pd.Series:
        """
        Computes the dynamic rolling residual of the asset relative to the benchmark.
        """
        # Both inputs are assumed to already be in log-price units.
        y = price_series.rename("log_price")
        x = benchmark_series.rename("log_benchmark")
        X = sm.add_constant(x)
        # Coefficients at time t are estimated on the trailing window ending at t.
        result = RollingOLS(y, X, window=window).fit()
        params = result.params
        fitted = params["const"] + params["log_benchmark"] * x
        residual = (y - fitted).rename(f"rolling_regression_residual_{window}")
        return residual

In algorithmic trading, raw price vectors deceive predictive models by presenting sector-wide beta expansion as idiosyncratic momentum. A single equity instrument may display severe overbought technical geometry when the underlying condition is uniform sector strength. A cryptocurrency token may exhibit severe downward drift when the entire beta complex is repricing. Feeding raw prices into a deep neural network forces the network to allocate hidden layers to the task of inferring the benchmark matrix and computing the subtraction. By passing $\hat{u}_t$, the transformation executes the subtraction analytically, presenting the network with the pure, actionable relative-value deviation.

The geometric shift transforms a trend-dominated price path into a sequence that continually crosses zero, widening when the beta relationship degrades and compressing when the hedge is tight. This sequence describes relative-value dislocations that are primed for statistical arbitrage.

The implementation contains a critical stability trade-off governed by the window parameter $w$. A short window allows the estimate, and the matrix inversion $(X^\top X)^{-1}$ behind it, to adapt to microstructural shifts in beta, but exposes the parameters to severe estimation noise and matrix ill-conditioning. A long window stabilizes the eigenvalue structure of the covariance matrix but renders the hedge ratio unresponsive to macroeconomic events.
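The estimation-noise side of this trade-off shows up even when the true beta never changes. The sketch below is a toy simulation under stated assumptions: the inputs are synthetic returns rather than log-price levels, the true beta is constant at 1.2, and `rolling_beta` is a hypothetical helper using the covariance-over-variance form of the slope:

```python
import numpy as np
import pandas as pd


def rolling_beta(y: pd.Series, x: pd.Series, window: int) -> pd.Series:
    """Rolling OLS slope of y on x (with intercept), as cov(x, y) / var(x)."""
    return x.rolling(window).cov(y) / x.rolling(window).var()


rng = np.random.default_rng(7)
n = 2000
x = pd.Series(rng.normal(0.0, 0.01, n))            # synthetic benchmark returns
y = 1.2 * x + pd.Series(rng.normal(0.0, 0.01, n))  # true beta fixed at 1.2

beta_short = rolling_beta(y, x, 10).dropna()
beta_long = rolling_beta(y, x, 250).dropna()

# Dispersion of the estimate around a beta that is constant by construction.
print(beta_short.std(), beta_long.std())
```

All of the variation in the short-window estimate here is noise, and that noise feeds straight into the residual feature. The cost of the long window does not appear in this toy setup because beta is held fixed; it would appear as lag if beta were allowed to shift.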

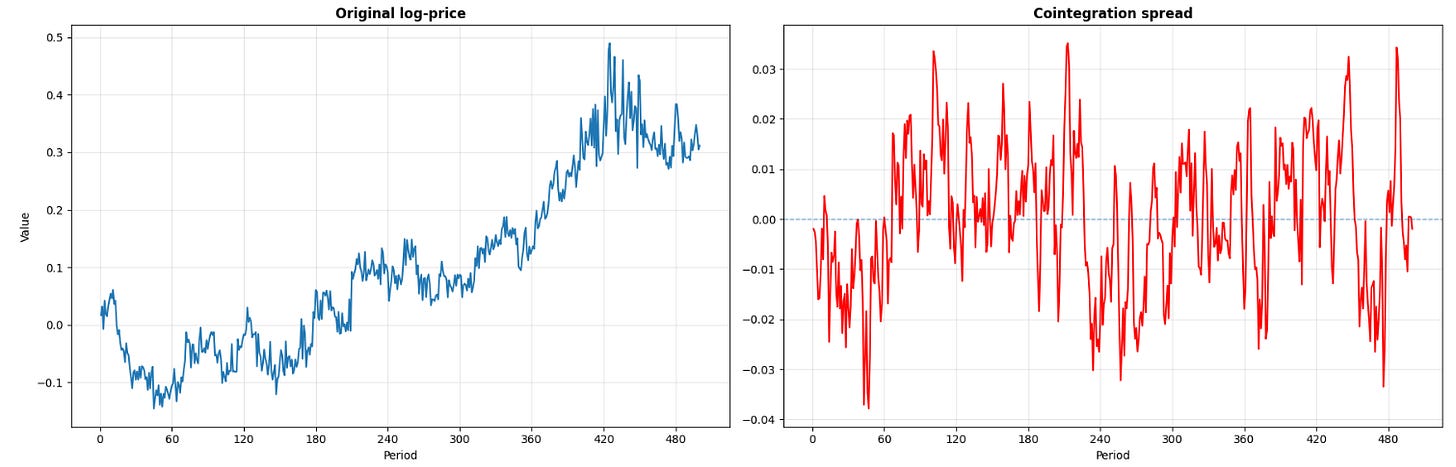

Stochastic trend removal via cointegration

The third data-shape transformation addresses the phenomenon of common stochastic trends. Referred to as the Engle-Granger residual or the cointegration spread, this method goes beyond local benchmark neutralization by enforcing an equilibrium hypothesis. It relies on the result that while two distinct log-price series $y_t$ and $x_t$ may both possess unit roots, a specific linear combination of the two series may be integrated of order 0, yielding a stationary process.

If there exists a parameter vector $[\alpha, \beta]$ such that the linear combination:

$$
s_t = y_t - \alpha - \beta x_t
$$

is a stationary process, the variables are defined as cointegrated, and $s_t$ represents the equilibrium spread. The Engle-Granger two-step procedure first estimates $\alpha$ and $\beta$ via ordinary least squares on a causally valid training set, and then applies an Augmented Dickey-Fuller test to the residual sequence $s_t$ to test the null hypothesis of a unit root.
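The "causally valid training set" requirement in the first step can be sketched as follows. This is an illustrative variant, not the article's function: `train_only_spread` and the `train_end` parameter are hypothetical names, the inputs are assumed to be aligned log-price series without missing values, and the data are synthetic. The coefficients come from the training slice only and are then frozen:

```python
import numpy as np
import pandas as pd


def train_only_spread(y: pd.Series, x: pd.Series, train_end: int) -> pd.Series:
    """Fit alpha and beta on the first `train_end` rows, then apply them everywhere."""
    x_train = x.iloc[:train_end].to_numpy()
    y_train = y.iloc[:train_end].to_numpy()
    design = np.column_stack([np.ones(train_end), x_train])
    alpha, beta = np.linalg.lstsq(design, y_train, rcond=None)[0]
    # A unit-root test on the training-slice spread would follow here
    # (for example statsmodels' adfuller); it is omitted from this sketch.
    return (y - alpha - beta * x).rename("cointegration_spread")


rng = np.random.default_rng(3)
x = pd.Series(4.0 + rng.normal(0.0, 0.01, 500).cumsum())    # synthetic log partner
y = 0.3 + 0.8 * x + pd.Series(rng.normal(0.0, 0.005, 500))  # synthetic log price

spread = train_only_spread(y, x, train_end=300)
print(spread.iloc[:300].std(), spread.iloc[300:].std())
```

Because the hedge ratio is frozen at row 300, nothing that happens afterwards can alter the spread values the model saw during training. Residual-based unit-root tests use different critical values from a plain Augmented Dickey-Fuller test, which is one reason to prefer a dedicated Engle-Granger routine for the second step.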

The advantage of the cointegration spread over a simple rolling OLS residual lies in its equilibrium framework and the physical market mechanics that enforce it. A dynamic rolling residual states that a local, temporary variance explanation exists. It makes no claims about the terminal behavior of the asset pair. A cointegration spread asserts that the two assets are structurally linked to each other by an exogenous economic force. This force could be identical underlying cash flows (in the case of dual-listed equities), arbitrage boundaries enforced by Exchange Traded Fund creation and redemption mechanisms, or capitalized statistical arbitrage desks trading the structural mean. The raw log-prices are permitted to drift into uncharted coordinate space, but the distance metric between them, $s_t$, is constrained to revert to its historical mean.

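The standard Error Correction Model representation models the short-term dynamics of the system as a direct response to the current magnitude of the equilibrium deviation, while controlling for localized high-frequency shocks. In its generic form, with lag orders $p$ and $q$ left as a modeling choice:

$$
\Delta y_t = c + \lambda s_{t-1} + \sum_{i=1}^{p} \phi_i \Delta y_{t-i} + \sum_{i=1}^{q} \gamma_i \Delta x_{t-i} + \varepsilon_t
$$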

In this structural equation, λ represents the speed-of-adjustment coefficient, and it is the most critical parameter in the relative-value strategy. The predictive alpha is not contained in the absolute nominal level of yt or xt. The actionable alpha is isolated within st-1, the exact geometric distance from equilibrium. By executing this transformation, the researcher compresses the shared macroeconomic drift and aligns the input feature with the logic of convergence.

The magnitude of λ dictates the interactions of the trading book. A high absolute value of λ implies rapid mean reversion. This creates a high-turnover strategy that requires minimal capital lockup but demands extreme execution efficiency, as the profit margin per trade is small and sensitive to latency. Conversely, a low absolute value of λ implies slow, structural reversion. This dictates a low-turnover, capacity-rich strategy that suffers from capital lockup and extended periods of mark-to-market drawdown when the spread temporarily widens beyond historical norms.
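The link between the adjustment speed and the holding horizon can be made explicit through the half-life of a deviation. The sketch below assumes the simplest possible error-correction dynamics, a demeaned spread following Δs_t = λ s_{t-1} + e_t with no lagged difference terms; `adjustment_speed_and_half_life` is a hypothetical helper and the two spreads are noiseless toy examples:

```python
import numpy as np


def adjustment_speed_and_half_life(spread):
    """Estimate lambda from delta_s_t = lambda * s_{t-1} + e_t and convert to a half-life."""
    s = np.asarray(spread, dtype=float)
    lagged, delta = s[:-1], np.diff(s)
    lam = float(lagged @ delta / (lagged @ lagged))  # OLS slope without intercept
    half_life = np.log(0.5) / np.log(1.0 + lam)      # meaningful only for -1 < lam < 0
    return lam, half_life


fast_spread = 0.02 * 0.90 ** np.arange(50)  # loses 10% of the gap per bar
slow_spread = 0.02 * 0.99 ** np.arange(50)  # loses 1% of the gap per bar

lam_fast, hl_fast = adjustment_speed_and_half_life(fast_spread)
lam_slow, hl_slow = adjustment_speed_and_half_life(slow_spread)
print(lam_fast, hl_fast)
print(lam_slow, hl_slow)
```

A tenfold drop in the absolute value of λ stretches the half-life from under seven bars to almost seventy, which is the difference between a latency-sensitive book and one that has to finance long stretches of mark-to-market drawdown.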

    import pandas as pd
    import statsmodels.api as sm


    def cointegration_spread(
        price_series: pd.Series,
        partner_series: pd.Series,
    ) -> pd.Series:
        """
        Estimates the Engle-Granger equilibrium spread.
        """
        # Both inputs are assumed to already be in log-price units.
        y = price_series.rename("log_price")
        x = partner_series.rename("log_partner")
        X = sm.add_constant(x)
        # Note: alpha and beta are fit on every row passed in; for a causally
        # valid feature they should come from a training slice only.
        fit = sm.OLS(y, X, missing="drop").fit()
        spread = (y - fit.predict(X)).rename("cointegration_spread")
        return spread

Transforming non-stationary prices into a stationary spread state variable provides relief to machine learning algorithms. If a random forest or a gradient boosting machine is fed two raw, drifting I(1) sequences, it will attempt to construct arbitrary, overfit split points in coordinate spaces that the asset has never historically visited. When the asset prices drift beyond the maximum values observed in the training set, the tree algorithm can only output a constant terminal leaf value, breaking the model. Similarly, feeding unbounded I(1) sequences into deep neural networks causes severe gradient instability and forces activation functions into extreme saturation zones. By feeding the optimization algorithm the stationary sequence $s_t$, the algorithm is restricted to evaluating the magnitude and velocity of the equilibrium error. The model focuses its entire computational capacity on timing the reversion and sizing the risk optimally, rather than wasting matrix operations attempting to subtract non-stationary trends.

Remember that correlation does not imply cointegration. Two assets can exhibit a Pearson correlation coefficient of 0.95 over a multi-year horizon while the residual of one regressed on the other still fails to reject a unit root. Trading this spurious pattern leads to the classic relative-value failure where the spread widens, the model increases the position size assuming mean reversion, and the error variance expands until you face a margin call.

Furthermore, a statistically verified stationary spread is not guaranteed to be economically tradable. The theoretical spread $s_t$ ignores market friction. If the half-life of mean reversion exceeds the horizon over which the portfolio's margin constraints can carry the position, the position will be forcibly liquidated before the equilibrium error corrects. Additionally, if the borrow costs on the short leg of the spread exceed the expected yield of the reversion, the statistical feature has no value in production.

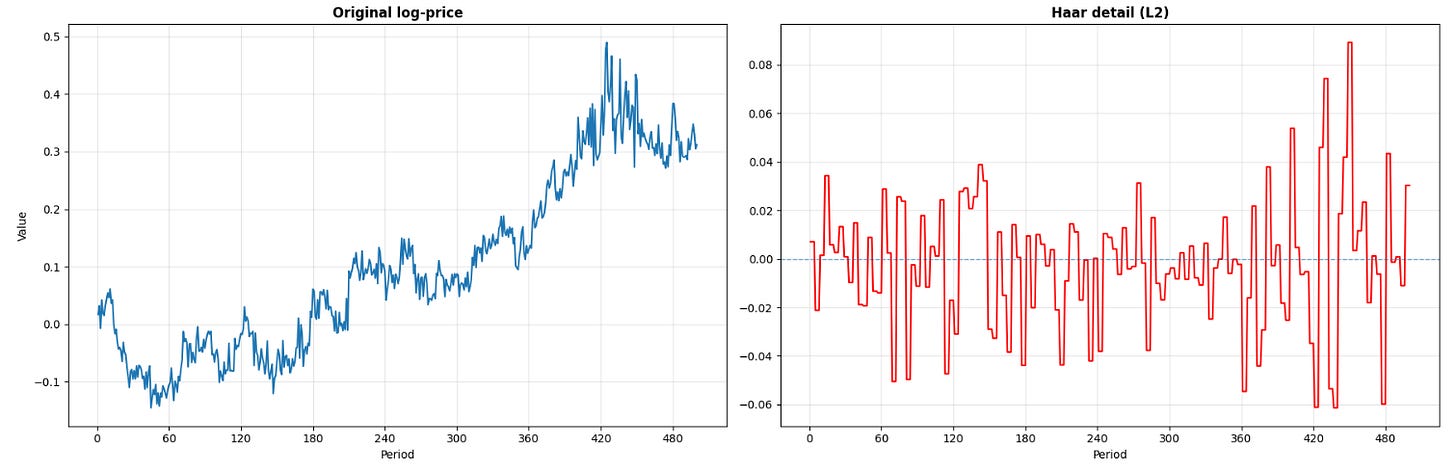

Multiscale isolation approach

The fourth transformation steps away from reference benchmarks and equilibrium partners to address internal scale contamination. The algorithm extracts the Haar wavelet detail coefficient. In signal processing, this operator isolates a multiscale high-frequency component while decimating low-frequency drift. This shape transformation is deployed when the researcher has established that the predictive alpha resides within a very specific time scale, and is masked by the simultaneous presence of microstructural noise at lower scales and macro-level cumulative drift at higher scales.

Financial order books process an amalgamation of participants operating at different frequencies. Tick-by-tick is dominated by market makers canceling and replacing quotes. Intraday minutes to hours is dominated by algorithmic execution engines slicing large institutional blocks via VWAP algorithms. Days to weeks is dominated by fundamental portfolio reallocation. A standard differencing operator (calculating a basic log return) compresses all of these distinct horizons into an identical, localized logic. It forces the learning algorithm to separate these overlapping frequency bands using raw function approximation.

The discrete Haar wavelet transform operates as an orthogonal filter bank designed to decouple these scales. It applies a low-pass filter to compute local averages, pushing the slow trend information into a lower-frequency approximation space. Simultaneously, it applies a high-pass filter to compute local differences, capturing the exact detail variation occurring uniquely at that specific scale. For adjacent discrete observations $a$ and $b$, the fundamental operations are:

$$
\text{average} = \frac{a + b}{\sqrt{2}}, \qquad \text{detail} = \frac{a - b}{\sqrt{2}}
$$

Applied recursively in a cascaded architecture, the algorithm generates multiscale approximation coefficients and detail coefficients. A detail coefficient extracted at Level 2 represents the difference of the local averages computed at Level 1. This allows the researcher to target a specific frequency band (a precise temporal horizon) rather than defaulting to the single-step increment forced by standard return calculations.
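A four-point worked example makes the cascade concrete. The numbers are arbitrary, `haar_step` is a hypothetical helper, and the sign convention (first element minus second, scaled by the square root of two) is an assumption chosen to keep the transform orthonormal:

```python
import numpy as np


def haar_step(values: np.ndarray):
    """One level of the decimated Haar transform on an even-length array."""
    a, b = values[0::2], values[1::2]
    return (a + b) / np.sqrt(2), (a - b) / np.sqrt(2)


x = np.array([4.0, 6.0, 10.0, 12.0])

approx1, detail1 = haar_step(x)        # Level 1: pairs (4, 6) and (10, 12)
approx2, detail2 = haar_step(approx1)  # Level 2: difference of the Level 1 averages

print(detail1)   # within-pair moves
print(detail2)   # move between the two pairs
print(approx2)   # what remains of the slow component

# Orthogonality check: the coefficients carry the same energy as the input.
energy_in = np.sum(x ** 2)
energy_out = np.sum(detail1 ** 2) + np.sum(detail2 ** 2) + np.sum(approx2 ** 2)
print(energy_in, energy_out)
```

The Level 1 details only see the small moves inside each pair, while the single Level 2 detail, equal to (4 + 6 - 10 - 12) / 2 = -6, captures the larger move between the two halves of the window.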

The selection of the Haar wavelet over smoother alternatives (such as the Morlet or higher-order Daubechies wavelets) is intentional in financial time series analysis. Smoother wavelets suit smooth signals like acoustics or electromagnetics. Market prices are noisy and move in discrete jumps. The Haar basis function is defined as a step function. It matches the shape of a market dislocation, preserving the exact magnitude of the discrete jump without introducing the artificial ringing artifacts or smoothed boundaries common with longer, smoother filters.

To utilize this transformed output in a predictive feature matrix alongside standard, daily-sampled covariates, the implementation requires a length-preserving operation. The standard decimated wavelet transform halves the array length at each level. To align the sequence with the original time index t, the system must upsample the coefficients. In live trading systems, this upsampling must be causal. Utilizing standard linear interpolation imports future values into the current state. The correct engineering solution relies on zero-order hold upsampling, repeating the most recent coefficient forward to prevent any look-ahead bias.

The resulting geometric intervention transforms a drifting, multi-layered log-price path into a sequence clustered around zero, where the amplitude of the oscillations represents the magnitude of the targeted scale-specific burst. The lower-frequency trend components are annihilated.

    import numpy as np
    import pandas as pd


    def haar_detail_same_length(price_series: pd.Series, level: int = 2) -> pd.Series:
        """
        Extracts the multiscale high-frequency detail coefficient using a discrete
        Haar wavelet filter bank and returns it with the same length as the input.
        """
        x = np.asarray(price_series, dtype=float)
        n = len(x)
        coeffs = x.copy()
        current_len = n
        detail = None
        block = 1
        for _ in range(level):
            if current_len < 2:
                break
            # Drop a trailing unpaired sample so the pairs line up.
            used_len = current_len if current_len % 2 == 0 else current_len - 1
            current = coeffs[:used_len]
            avg = (current[0:used_len:2] + current[1:used_len:2]) / np.sqrt(2)
            det = (current[0:used_len:2] - current[1:used_len:2]) / np.sqrt(2)
            coeffs[: len(avg)] = avg
            current_len = len(avg)
            detail = det
            block *= 2
        if detail is None:
            return pd.Series(
                np.nan,
                index=price_series.index,
                name=f"haar_detail_L{level}",
            )
        # Zero-order hold: each coefficient spans `block` samples.
        upsampled = np.repeat(detail, block)
        if len(upsampled) < n:
            upsampled = np.r_[upsampled, np.repeat(upsampled[-1], n - len(upsampled))]
        # Shift by block - 1 so a coefficient first appears on the last sample of
        # the block it was computed from, never earlier.
        upsampled = np.r_[np.full(block - 1, np.nan), upsampled]
        return pd.Series(
            upsampled[:n],
            index=price_series.index,
            name=f"haar_detail_L{level}",
        )

This transformation is critical for horizon-specific execution logic. Consider a temporary order book imbalance generated by digesting a scheduled macroeconomic data release versus the slow absorption of a multi-day block trade. The macro release generates a visible, high-amplitude signature. However, this precise signature remains undetectable within the raw log-price sequence due to the overwhelming magnitude of the concurrent multi-month macro trend drift. By passing the multiscale detail coefficient $D_t^{(j)}$ to the predictive model, the signal-to-noise ratio is maximized at the exact frequency the strategy is designed to hold the position. The optimization algorithm can recognize the structural footprint of the transient shock.

The limitations of wavelet transformations revolve around boundary conditions, asynchronous sampling, and noise. When applied to raw tick data characterized by bid-ask bounce and irregular timestamps, fine-scale detail coefficients will isolate and amplify the microstructural noise rather than the intended economic event. Wavelet transforms assume a uniform sampling grid; violating this assumption distorts the frequency targeting.

Furthermore, handling the right-hand boundary (the actual live time t) is hazardous. Wavelet filters require data points that straddle the center point of the convolution. At the live edge of the time series, the filter lacks the future data points necessary to complete the calculation. Utilizing symmetric boundary extension—a common default in open-source Python libraries—folds the array back on itself, utilizing data points from t-k as proxies for t+k. While elegant, this corrupts the state variable at the exact moment the trading system requires it. Researchers must enforce causal padding or utilize specialized boundary wavelets to ensure the feature matrix remains uncontaminated.
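One way to sidestep the boundary problem for the Haar case is to compute the detail at every t from a trailing window only, which is an undecimated variant of the transform rather than the decimated filter bank. The sketch below is a hedged illustration of that idea: `causal_haar_detail` is a hypothetical name, and the scaling and sign follow the convention (older half minus recent half, divided by 2 to the power level/2) that matches a cascade of (a ± b)/√2 steps:

```python
import numpy as np
import pandas as pd


def causal_haar_detail(series: pd.Series, level: int = 2) -> pd.Series:
    """Level-`level` Haar detail at each t, built from the trailing 2**level points."""
    half = 2 ** (level - 1)
    recent = series.rolling(half).sum()   # sum over the most recent half-window
    older = recent.shift(half)            # sum over the half-window before it
    return ((older - recent) / 2 ** (level / 2)).rename(f"causal_haar_L{level}")


demo = causal_haar_detail(pd.Series([4.0, 6.0, 10.0, 12.0]), level=2)
print(demo)

# Causality check: changing the future must not change the past of the feature.
rng = np.random.default_rng(5)
path = pd.Series(rng.normal(0.0, 0.01, 200).cumsum())
shocked = path.copy()
shocked.iloc[121:] += 0.5
same_past = np.allclose(
    causal_haar_detail(path).iloc[:121],
    causal_haar_detail(shocked).iloc[:121],
    equal_nan=True,
)
print(same_past)
```

The first 2**level - 1 values are undefined because the window is not yet full, and no padding of any kind is needed at the live edge since the window never reaches past t.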

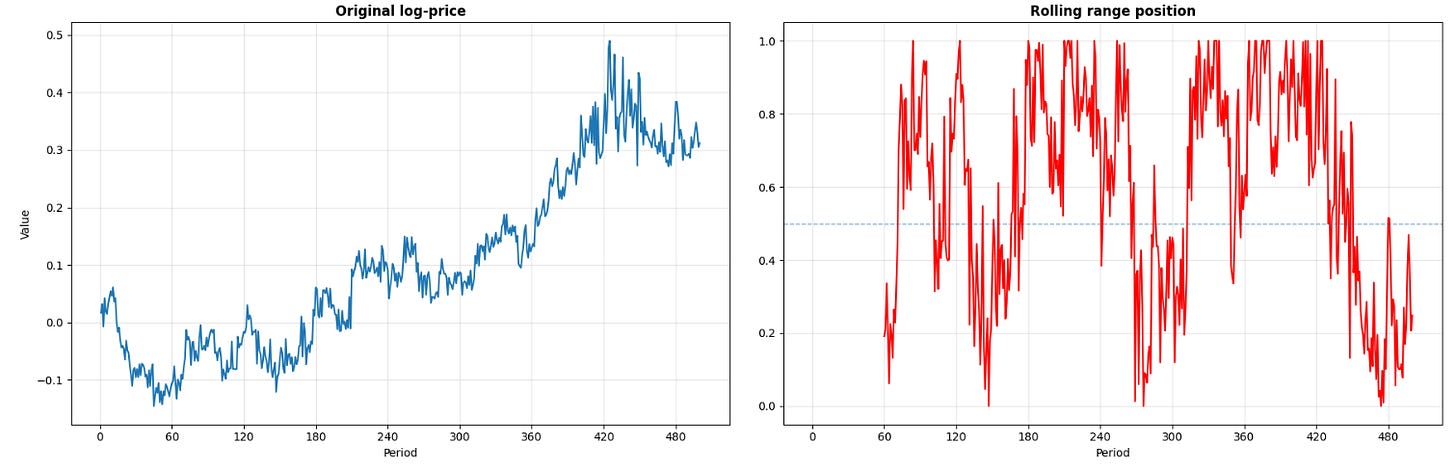

Bounded state encoding

The fifth and final transformation algorithm in this framework is the rolling range position. While computationally simpler than state-space estimators or orthogonal wavelets, it applies a non-linear, path-dependent rescaling: it converts a non-stationary price path into a bounded state occupancy variable.

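Let $P_{(1),t}$ denote the minimum order statistic and $P_{(n),t}$ the maximum order statistic of the price sequence over a causal trailing window of length $w$ ending at time $t$, and let $P_t$ be the current price. The rolling range position $r_t$ is defined as:

$$r_t = \frac{P_t - P_{(1),t}}{P_{(n),t} - P_{(1),t} + \varepsilon}$$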

Assuming the local range is positive, the output space of $r_t$ is bounded within the closed interval [0, 1]. (The small constant ε in the denominator is a production requirement to prevent zero-division errors during market halts, extended limit-up/limit-down states, or extreme microstructural illiquidity where the local maximum exactly equals the local minimum.)
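The effect of the ε guard can be seen on a window where the price does not move. The sketch below is illustrative only: the function name `range_position_eps` and the value `eps=1e-12` are assumptions, not taken from the article's code, which masks a zero range with NaN instead.

```python
import numpy as np
import pandas as pd


def range_position_eps(
    series: pd.Series, window: int = 60, eps: float = 1e-12
) -> pd.Series:
    """Hypothetical variant of the rolling range position with an epsilon guard."""
    lo = series.rolling(window).min()
    hi = series.rolling(window).max()
    # eps keeps the ratio finite when hi == lo (for example, during a halt).
    return ((series - lo) / (hi - lo + eps)).rename(f"range_position_eps_{window}")


# Toy path: a rise followed by a flat stretch, then a small drop.
prices = pd.Series([1.0, 2.0, 3.0, 3.0, 3.0, 3.0, 2.0])
r = range_position_eps(prices, window=3)
print(r)
```

With the guard, a fully flat window reads as 0.0, which pins the feature to the lower bound even though the price is not testing any boundary. Masking that case with NaN, as the article's function does, is the alternative design choice when a model should treat halted states as missing.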

A feature value of 0.05 indicates that the current market price is compressed against its recent lower extreme. A value of 0.98 indicates extreme upper-bound occupancy. This transformation removes the nominal price level by rescaling with the local range.

Breakout momentum models, dynamic stop-loss placement, and urgency-based execution routing evaluate expected return based on whether the local boundary conditions are being tested or rejected. Furthermore, bounded interval features are largely immune to cross-sectional scaling issues. If a portfolio must evaluate a penny stock trading at 1.50 and a mega-cap equity trading at 3,500, passing the raw levels or even standard deviations will warp the loss function. The variable $r_t$ normalizes the topological space, making the states comparable across disparate asset classes.

def rolling_range_position(series: pd.Series, window: int = 60) -> pd.Series:
    """
    Maps the series into [0, 1] based on the rolling min-max range.
    """
    lo = series.rolling(window).min()
    hi = series.rolling(window).max()
    # A zero range (flat window) is masked with NaN to avoid division by zero.
    pos = (series - lo) / (hi - lo).replace(0, np.nan)
    return pos.rename(f"range_position_{window}")

Bounded variables interact well with tree-based learning algorithms (Random Forests, Gradient Boosted Trees), which tend to generalize better when given bounded state features. Trees partition the feature space with axis-aligned splits. If the raw price keeps trending upward into a numerical region never seen in training, the tree can only output the constant value of its outermost terminal leaf. By mapping the price into $r_t$, the price drift is continually folded back into the [0, 1] interval, allowing the decision tree to apply its historically learned logic regarding bound-rejection.
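The extrapolation problem can be made concrete without fitting any model, by measuring how often a feature leaves the numerical range it covered during training. The sketch below uses a synthetic upward-drifting log-price (an assumption, as are the seed, the 50/50 split, and the helper name `share_outside_train_range`) and compares the raw level with its rolling range position.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(7)
n = 2000
# Synthetic log-price with upward drift (illustrative assumption).
log_price = pd.Series(np.cumsum(rng.normal(0.001, 0.01, n)))

window = 60
lo = log_price.rolling(window).min()
hi = log_price.rolling(window).max()
range_pos = ((log_price - lo) / (hi - lo).replace(0, np.nan)).dropna()


def share_outside_train_range(feature: pd.Series, split: int) -> float:
    """Fraction of test observations outside the [min, max] seen in training."""
    train, test = feature.loc[: split - 1], feature.loc[split:]
    outside = (test < train.min()) | (test > train.max())
    return float(outside.mean())


split = n // 2
raw_share = share_outside_train_range(log_price, split)
bounded_share = share_outside_train_range(range_pos, split)
print(f"raw level outside training range:      {raw_share:.1%}")
print(f"range position outside training range: {bounded_share:.1%}")
```

Any test observation outside the training range lands in an outermost leaf of a tree fitted on the training half, so the first share is a rough proxy for how often the tree would be forced to return a constant.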

The danger of the rolling range position lies in the non-differentiability at the boundaries and the assumption of local support. The trailing window w enforces a boundary that the market does not acknowledge. The range is an artifact of local sample memory. If w is excessively short, standard Brownian noise will trigger extreme readings of 0 or 1, causing the classifier to generate false breakout signals. If w is excessively long, the range minimum and maximum become anchored to stale macro-regimes, rendering the current fluctuations invisible as the variable hovers near 0.5. The parameter w must be mapped to the temporal memory of the specific market participants the strategy is attempting to exploit.
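The sensitivity to w can be checked on a driftless random walk, where there is no boundary structure to detect by construction. This is a hedged sketch: the seed, the window lengths 5 and 250, and the helper names are illustrative assumptions. It reports how often the feature is pinned at exactly 0 or 1, and how far it moves per step on average.

```python
import numpy as np
import pandas as pd


def range_position(series: pd.Series, window: int) -> pd.Series:
    """Rolling min-max position, with a zero range masked as NaN."""
    lo = series.rolling(window).min()
    hi = series.rolling(window).max()
    return (series - lo) / (hi - lo).replace(0, np.nan)


def pinned_share(series: pd.Series, window: int) -> float:
    """Fraction of readings sitting exactly on a boundary (0 or 1)."""
    r = range_position(series, window).dropna()
    return float(((r == 0.0) | (r == 1.0)).mean())


def mean_step_move(series: pd.Series, window: int) -> float:
    """Average absolute one-step change of the feature."""
    return float(range_position(series, window).diff().abs().mean())


rng = np.random.default_rng(11)
walk = pd.Series(np.cumsum(rng.normal(0.0, 0.01, 5000)))  # pure noise, no signal

for w in (5, 250):
    print(w, round(pinned_share(walk, w), 3), round(mean_step_move(walk, w), 3))
```

On pure noise, the short window should sit on a boundary far more often and move much more per step than the long window, which is the false-breakout and stale-range trade-off described above.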

Okay! Great job today, guys! Solid work. And remember, the full code is in the appendix, sitting there for you to dissect and torture as much as you like. Time to say goodbye. Stay sharp, stay bold, stay unstoppable 📈


Appendix

Full code

import numpy as np
import pandas as pd
import statsmodels.api as sm
from statsmodels.tsa.statespace.structural import UnobservedComponents
from statsmodels.regression.rolling import RollingOLS
from matplotlib.ticker import MaxNLocator

# Data

def generate_synthetic_market_data(n_samples: int = 1000) -> pd.DataFrame:
    """
    Generates realistic log-price data exhibiting stochastic trend,
    benchmark beta exposure, and pair cointegration.
    """
    np.random.seed(42)
    dates = pd.date_range(start="2020-01-01", periods=n_samples, freq="D")

    benchmark_returns = np.random.normal(loc=0.0002, scale=0.01, size=n_samples)
    benchmark_log_price = np.cumsum(benchmark_returns)

    beta_true = 1.2
    idiosyncratic_shocks = np.random.normal(loc=0.0, scale=0.015, size=n_samples)
    idiosyncratic_shocks[400:450] += np.random.normal(loc=0.02, scale=0.05, size=50)

    latent_trend = np.cumsum(
        np.random.normal(loc=-0.0001, scale=0.005, size=n_samples)
    )
    asset1_log_price = (
        beta_true * benchmark_log_price
        + latent_trend
        + idiosyncratic_shocks
    )

    equilibrium_error = np.zeros(n_samples)
    for i in range(1, n_samples):
        equilibrium_error[i] = (
            0.85 * equilibrium_error[i - 1] + np.random.normal(0, 0.008)
        )
    asset2_log_price = asset1_log_price - 0.5 - equilibrium_error

    df = pd.DataFrame(
        {
            "log_benchmark": benchmark_log_price,
            "log_price": asset1_log_price,
            "log_partner": asset2_log_price,
        },
        index=dates,
    )
    return df

# Transformation algorithms

def state_space(price_series: pd.Series) -> pd.Series:
    """
    Extracts the one-step-ahead forecast error from a local linear trend
    state-space model.
    """
    lp = price_series.rename("log_price")
    model = UnobservedComponents(lp, level="local linear trend")
    # The variance parameters are estimated once on the full sample passed in.
    result = model.fit(disp=False)
    space = pd.Series(
        result.filter_results.forecasts_error[0],
        index=lp.index,
        name="state_space",
    )
    # The first forecast error comes from the filter initialization; drop it.
    space.iloc[0] = np.nan
    return space

def rolling_regression_residual(
    price_series: pd.Series,
    benchmark_series: pd.Series,
    window: int = 80,
) -> pd.Series:
    """
    Computes the dynamic rolling residual of the asset relative to the benchmark.
    """
    y = price_series.rename("log_price")
    x = benchmark_series.rename("log_benchmark")
    X = sm.add_constant(x)
    result = RollingOLS(y, X, window=window).fit()
    params = result.params
    fitted = params["const"] + params["log_benchmark"] * x
    residual = (y - fitted).rename(f"rolling_regression_residual_{window}")
    return residual

def cointegration_spread(
    price_series: pd.Series,
    partner_series: pd.Series,
) -> pd.Series:
    """
    Estimates the Engle-Granger equilibrium spread.
    """
    y = price_series.rename("log_price")
    x = partner_series.rename("log_partner")
    X = sm.add_constant(x)
    # Static OLS over every observation passed in (first Engle-Granger step).
    fit = sm.OLS(y, X, missing="drop").fit()
    spread = (y - fit.predict(X)).rename("cointegration_spread")
    return spread

def haar_detail_same_length(price_series: pd.Series, level: int = 2) -> pd.Series:
    """
    Extracts the multiscale high-frequency detail coefficient using a discrete
    Haar wavelet filter bank and returns it with the same length as the input.
    """
    x = np.asarray(price_series, dtype=float)
    n = len(x)
    coeffs = x.copy()
    current_len = n
    detail = None
    block = 1

    for _ in range(level):
        if current_len < 2:
            break
        # Drop the last point when the current length is odd.
        used_len = current_len if current_len % 2 == 0 else current_len - 1
        current = coeffs[:used_len]
        avg = (current[0:used_len:2] + current[1:used_len:2]) / np.sqrt(2)
        det = (current[0:used_len:2] - current[1:used_len:2]) / np.sqrt(2)
        coeffs[: len(avg)] = avg
        current_len = len(avg)
        detail = det
        block *= 2

    if detail is None:
        return pd.Series(
            np.nan,
            index=price_series.index,
            name=f"haar_detail_L{level}",
        )

    # Each coefficient is broadcast to every point of its block, so the first
    # points of a block depend on later observations within that block.
    upsampled = np.repeat(detail, block)
    if len(upsampled) < n:
        upsampled = np.r_[upsampled, np.repeat(upsampled[-1], n - len(upsampled))]

    return pd.Series(
        upsampled[:n],
        index=price_series.index,
        name=f"haar_detail_L{level}",
    )

def rolling_range_position(series: pd.Series, window: int = 60) -> pd.Series:
    """
    Maps the series into [0, 1] based on the rolling min-max range.
    """
    lo = series.rolling(window).min()
    hi = series.rolling(window).max()
    pos = (series - lo) / (hi - lo).replace(0, np.nan)
    return pos.rename(f"range_position_{window}")