[QUANT LECTURE] Sufficient statistics and minimal signals

Market Inefficiencies - Information Theoretic Approach

Before you begin, remember that you have an index with the newsletter content organized by clicking on “Read the newsletter index” in this image.

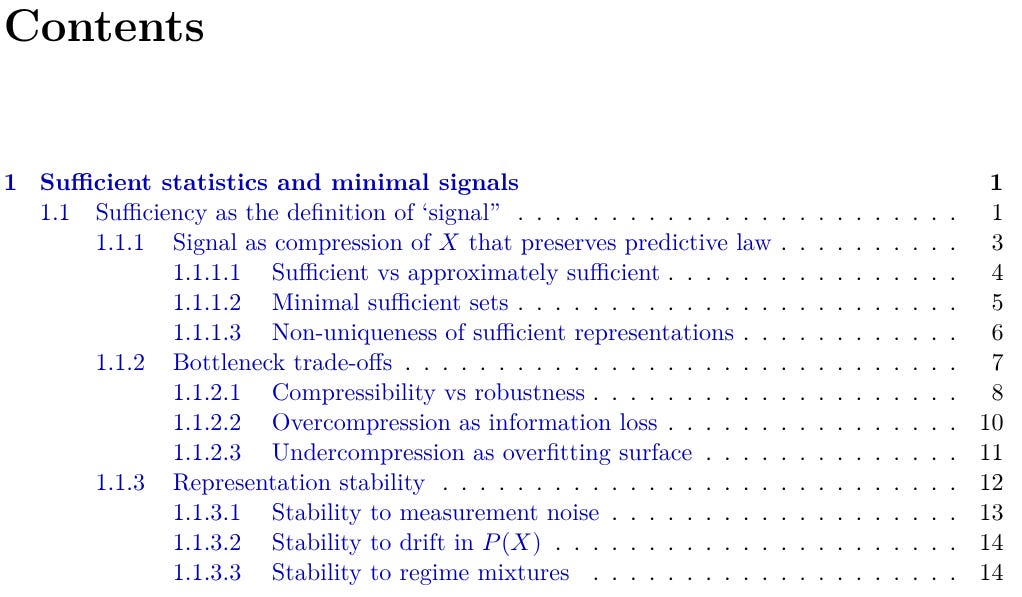


This lecture defines a trading signal through sufficiency rather than through a rule, threshold, or pattern. A signal is a compression of the decision-time information set that preserves the conditional law of the declared outcome, so its predictive meaning stays tied to the distribution of the future outcome rather than to a single backtest metric. The lecture then develops minimality, bottleneck design, and representation stability as the conditions that make the compressed state usable under finite data, drift, and noise.

What’s inside:

Sufficiency as the definition of signal. A signal is a representation that preserves the conditional distribution of the outcome, which separates signal from strategy and makes feature relevance a property of conditional-law preservation rather than raw correlation.

Signal as compression with law preservation. Compression becomes signal construction when the reduced state carries the same predictive content as the full decision-time information set, which lets the system operate on a smaller and more stable representation without changing the law it conditions on.
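As a compact statement of the two points above, here is one way to write sufficiency down. The notation is an assumption introduced for illustration, not necessarily the lecture's own: $X$ is the decision-time information set, $Y$ the declared outcome, and $S = T(X)$ the compressed state.

$$
S = T(X) \text{ is sufficient for } Y \iff P(Y \mid X) = P(Y \mid S) \quad \text{almost surely.}
$$

Because $S$ is a function of $X$, the chain rule for mutual information gives

$$
I(X;Y) - I(S;Y) = I(X;Y \mid S) = \mathbb{E}_X\!\left[ D_{\mathrm{KL}}\!\big( P(Y \mid X) \,\|\, P(Y \mid S) \big) \right] \ge 0 ,
$$

so, when $I(X;Y)$ is finite, the compression loses no predictive content exactly when this gap is zero. A feature can correlate with $Y$ and still add nothing once $S$ is known, which is the sense in which relevance is a property of law preservation rather than raw correlation.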

Approximate sufficiency in practice. Exact sufficiency is the ideal case. Practical sufficiency is defined against a declared tolerance, measured by divergence, proper-score regret, and the residual predictive content that remains outside the bottleneck.
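A minimal sketch of how such a tolerance check might look on a discrete toy problem. Everything here is hypothetical: the four raw states, their probabilities, the two candidate compressions, and the `TOLERANCE` value are illustrative assumptions, and the gap is measured as $I(X;Y) - I(S;Y)$ in nats, which equals the expected KL divergence between $P(Y \mid X)$ and $P(Y \mid S)$ and also the expected log-score regret of forecasting from $S$ instead of $X$.

```python
import math
from collections import defaultdict

def mutual_information(joint):
    """Mutual information I(A;B) in nats for a dict {(a, b): probability}."""
    pa, pb = defaultdict(float), defaultdict(float)
    for (a, b), p in joint.items():
        pa[a] += p
        pb[b] += p
    return sum(p * math.log(p / (pa[a] * pb[b]))
               for (a, b), p in joint.items() if p > 0)

def compress(joint, mapping):
    """Push the joint law of (X, Y) through the compression S = mapping[X]."""
    out = defaultdict(float)
    for (x, y), p in joint.items():
        out[(mapping[x], y)] += p
    return dict(out)

def sufficiency_gap(joint, mapping):
    """I(X;Y) - I(S;Y): predictive content left outside the compressed state."""
    return mutual_information(joint) - mutual_information(compress(joint, mapping))

# Hypothetical toy market: four equally likely raw states, binary outcome.
# States a and b share P(up) = 0.7; states c and d share P(up) = 0.4.
p_up = {"a": 0.7, "b": 0.7, "c": 0.4, "d": 0.4}
joint = {}
for x, q in p_up.items():
    joint[(x, "up")] = 0.25 * q
    joint[(x, "down")] = 0.25 * (1 - q)

lossless = {"a": 0, "b": 0, "c": 1, "d": 1}  # merges states with the same law
lossy = {"a": 0, "b": 1, "c": 0, "d": 1}     # blends states with different laws

TOLERANCE = 1e-3  # hypothetical declared tolerance, in nats

gap_lossless = sufficiency_gap(joint, lossless)
gap_lossy = sufficiency_gap(joint, lossy)
for name, gap in [("lossless", gap_lossless), ("lossy", gap_lossy)]:
    verdict = "within" if gap <= TOLERANCE else "outside"
    print(f"{name}: gap = {gap:.4f} nats ({verdict} tolerance)")
```

In this toy setup the first compression has a gap of zero up to floating-point error, while the second discards all of the roughly 0.046 nats of predictive content because each merged cell ends up with the unconditional probability of an up move.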

Minimal sufficient sets. A minimal signal is the smallest representation that still preserves the full conditional law. Minimality removes nuisance variation, lowers selection pressure, and keeps the signal portable across operators and model classes.

Non-uniqueness of sufficient representations. Many different representations can preserve the same predictive law, so the scientific question is whether a law-preserving representation exists, while the engineering question is which representation remains most stable under noise, drift, and deployment constraints.

Bottleneck trade-offs. Bottleneck design becomes an information-budget problem that balances relevance against representational capacity: too little compression increases variance and selection pressure, while too much compression removes predictive fragments that forecasting the outcome still needs.
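One standard way to formalize this budget is the classical information bottleneck objective. It is shown here as an assumed reference point, since the lecture's own formulation may differ; $X$, $Y$, and $S$ denote the decision-time information, the outcome, and the compressed state, and $\beta$ is a trade-off parameter.

$$
\min_{p(s \mid x)} \; I(X;S) - \beta \, I(S;Y), \qquad \beta > 0 .
$$

Here $I(X;S)$ is the capacity the representation spends on the raw information set and $I(S;Y)$ is the relevance it retains about the outcome. A small $\beta$ favours heavy compression at the risk of dropping predictive content, while a large $\beta$ retains more of the raw state and with it more nuisance variation.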

Overcompression and undercompression. Overcompression distorts the conditional law by blending distinct regimes. Undercompression creates a high-capacity overfitting surface, where sharp in-sample structure often fails under recurrence and proper scoring.
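The contrast can be illustrated with a small simulation. This is a sketch under stated assumptions, not the lecture's experiment: the two regimes, their probabilities, the 250 nuisance labels, the sample sizes, and the Laplace-smoothed frequency estimator are all hypothetical choices, and log loss stands in for the proper score.

```python
import math
import random
from collections import defaultdict

rng = random.Random(0)
P_UP = {0: 0.7, 1: 0.4}  # hypothetical regime-conditional probabilities of y = 1
N_NUISANCE = 250         # nuisance labels that carry no predictive content

def sample(n):
    """Draw (regime, nuisance, outcome) rows; the outcome depends on regime only."""
    rows = []
    for _ in range(n):
        regime = rng.randrange(2)
        nuisance = rng.randrange(N_NUISANCE)
        y = 1 if rng.random() < P_UP[regime] else 0
        rows.append((regime, nuisance, y))
    return rows

def fit(rows, key):
    """Laplace-smoothed estimate of P(y = 1) within each cell of key(row)."""
    ups, counts = defaultdict(int), defaultdict(int)
    for row in rows:
        cell = key(row)
        counts[cell] += 1
        ups[cell] += row[2]
    # Unseen cells fall back to 1/2 through the smoothing terms.
    return lambda row: (ups.get(key(row), 0) + 1) / (counts.get(key(row), 0) + 2)

def log_loss(rows, predict):
    """Average negative log-likelihood of the observed outcomes (lower is better)."""
    total = 0.0
    for row in rows:
        p = predict(row)
        total -= math.log(p if row[2] == 1 else 1 - p)
    return total / len(rows)

train, test = sample(1000), sample(20000)

representations = {
    "over": lambda r: 0,             # one cell: blends both regimes
    "minimal": lambda r: r[0],       # regime only
    "under": lambda r: (r[0], r[1])  # regime x nuisance: 500 sparse cells
}

results = {}
for name, key in representations.items():
    model = fit(train, key)
    results[name] = (log_loss(train, model), log_loss(test, model))
    print(f"{name:8s} in-sample {results[name][0]:.3f}  "
          f"out-of-sample {results[name][1]:.3f}")
```

In this setup the undercompressed representation should show the lowest in-sample loss and then lose to the regime-only representation out of sample, while the overcompressed one is worse out of sample because it forecasts every state with the blended probability.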

Representation stability. A minimal signal must retain stable meaning under measurement noise, drift in state occupancy, and regime mixtures, so conditional beliefs, calibration, and divergence structure remain coherent when the signal moves from research into live deployment.