[QUANT LECTURE] Local predictability via divergence

Market Inefficiencies - Information Theoretic Approach

Before you begin, remember that you have an index with the newsletter content organized by clicking on “Read the newsletter index” in this image.

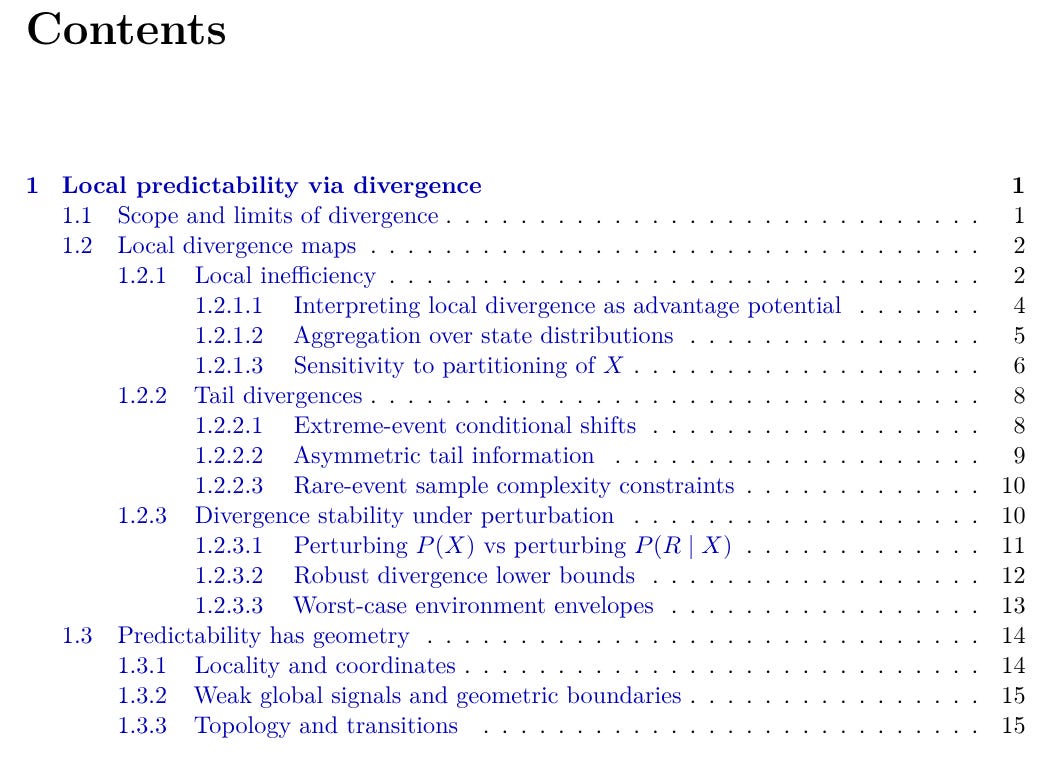

Local predictability via divergence

This chapter treats an inefficiency claim as a statement about distributional separation. Fix an outcome variable R and a horizon at which R is measured. The future is then represented by the probability law of R at decision time. The object of interest is the gap between the baseline future P(R) and the state-conditioned future P(R|X=x), where X is a declared state variable. A divergence measures this gap: it quantifies how much the predictive law of R changes when we move from “no state” to “state x.” This keeps the research claim separate from the execution layer that turns structure into PnL.
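
As a concrete anchor, one common choice of divergence is Kullback-Leibler. This is an illustrative assumption, since the text above does not fix a specific divergence. Writing p(r) for the baseline density of R and p(r | x) for the density of R given X = x (both symbols introduced here for illustration), the local separation at state x would be:

$$
D_{\mathrm{KL}}\big(P(R \mid X = x)\,\|\,P(R)\big) = \int p(r \mid x)\,\log\frac{p(r \mid x)}{p(r)}\,dr
$$

This quantity is zero exactly when the state-conditioned law coincides with the baseline, and positive otherwise. Averaging it over the distribution of X gives the mutual information I(R; X), which is one way to see why a divergence can act as a dependence detector while still being readable state by state.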

What’s inside:

What divergence is for in trading research. Divergence serves as a dependence detector that lives in conditional laws, so the claim stays tied to how the future distribution changes across states.

Scope and limits of divergence. The chapter draws a boundary between divergence evidence and realized profit, and it frames the inefficiency claim inside a declared horizon, state region, and environment class.

Local divergence maps. The workflow treats R and X as design objects, then compares P(R|X≈x) to P(R) across state domains to build a field of separations over the state space.

Local inefficiency domains. A local inefficiency corresponds to a state domain that supports a stable statement across repeated visits, with an activation domain and a transition zone where the conditional law returns toward the baseline mixture.

Tail divergences. The chapter introduces tail-focused separation to target structure that lives inside extremes, where distributional shape changes matter most for constraint-relevant outcomes.

Divergence stability under perturbation. Evidence is checked under perturbations of the measurement lens (domain rules, estimation choices) and recomputed on held-out path segments to verify persistence.

Predictability has geometry. The chapter treats predictability as a geometric object with locality, boundaries, and transitions, so the research claim becomes measurable along the market’s movement through state regions.
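
The local divergence map and the held-out check described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the chapter's own implementation: it uses a histogram-based KL divergence, quantile bins as state domains, and synthetic data in which the volatility of R doubles only when X exceeds 1. The names `kl_divergence`, `fit_cuts`, and `divergence_map` are hypothetical helpers introduced here.

```python
import numpy as np

def kl_divergence(p_counts, q_counts, eps=1e-12):
    """KL(p || q) in nats from two count vectors on the same bins."""
    p = np.asarray(p_counts, dtype=float) + eps  # eps avoids log(0) on empty bins
    q = np.asarray(q_counts, dtype=float) + eps
    p /= p.sum()
    q /= q.sum()
    return float(np.sum(p * np.log(p / q)))

def fit_cuts(values, n_bins):
    """Interior quantile cut points that split `values` into n_bins groups."""
    probs = np.linspace(0.0, 1.0, n_bins + 1)[1:-1]
    return np.quantile(values, probs)

def divergence_map(r, x, r_cuts, x_cuts):
    """KL(P(R | X in state k) || P(R)) for each state domain k."""
    n_bins = len(r_cuts) + 1
    n_states = len(x_cuts) + 1
    r_idx = np.searchsorted(r_cuts, r, side="right")  # outcome bin per sample
    state = np.searchsorted(x_cuts, x, side="right")  # state domain per sample
    baseline = np.bincount(r_idx, minlength=n_bins)
    out = []
    for k in range(n_states):
        cond = np.bincount(r_idx[state == k], minlength=n_bins)
        out.append(kl_divergence(cond, baseline))
    return np.array(out)

# Synthetic example (assumption): structure lives only in the region X > 1.
rng = np.random.default_rng(0)
n = 20_000
x = rng.normal(size=n)
r = rng.normal(size=n) * np.where(x > 1.0, 2.0, 1.0)

# Fit the measurement lens on the first path segment, reuse it on the second.
half = n // 2
r_cuts = fit_cuts(r[:half], n_bins=10)
x_cuts = fit_cuts(x[:half], n_bins=5)

map_a = divergence_map(r[:half], x[:half], r_cuts, x_cuts)  # estimation segment
map_b = divergence_map(r[half:], x[half:], r_cuts, x_cuts)  # held-out segment

print("estimation segment:", np.round(map_a, 4))
print("held-out segment:  ", np.round(map_b, 4))
```

In this toy setup the separation should concentrate in the highest X domain on both segments, which is the kind of persistence the stability check looks for. Histogram estimates of KL are biased upward in small samples, so the bin counts and the number of state domains are themselves part of the lens that would need to be perturbed.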