[WITH CODE] Evaluation: Reengineer Machine Learning metrics

Is your strategy calibrated—or just lucky?

Table of contents:

Introduction.

Reimagining ML metrics for market dynamics.

The seven pillars of enhanced algorithmic trading.

Precision-recall trade-off or the profit-loss matrix.

ROC curves and the opportunity cost framework.

Matthews Correlation Coefficient as the balanced compass for alpha.

G-mean and balanced accuracy.

Cohen's Kappa for unmasking performance beyond pure chance.

Brier score and probabilistic calibration.

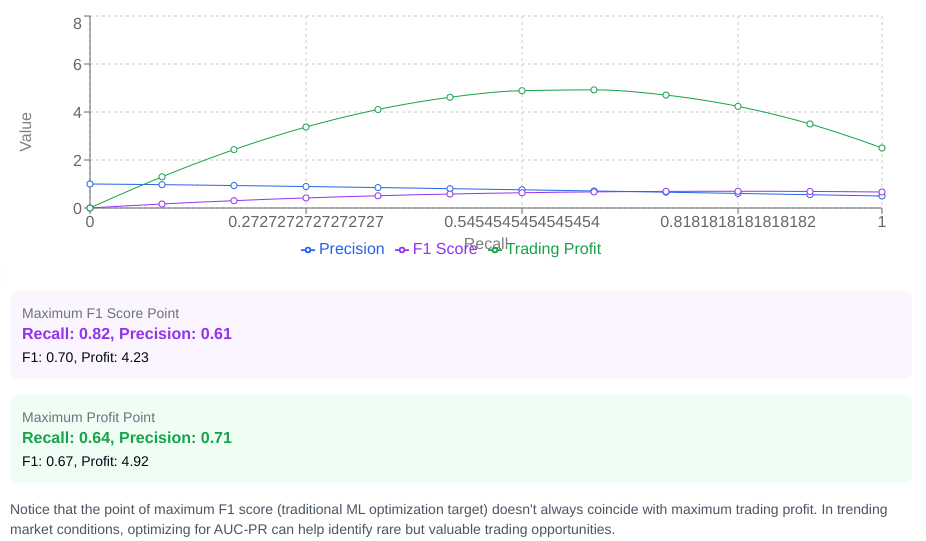

AUC-PR and imbalanced markets to find alpha in rare events.


Introduction

Today we operate at the intersection of two paradigms: the deterministic frameworks that form the backbone of quantitative finance and the adaptive pattern-recognition capabilities of machine learning. Traditional methodologies—refined through decades of rigorous statistical analysis, closed-form solutions, and stress-testing—provide interpretable models grounded in observable market relationships. Yet these approaches often falter when confronted with the non-stationary, reflexivity-driven nature of financial markets. The critical challenge lies in bridging the gap between the parsimony of analytical models and the often path-dependent dynamics of price.

Deploying machine learning in the markets introduces unique risks that demand rigorous scrutiny. Models trained on historical regimes frequently fail to adapt to structural breaks such as black swan events, monetary policy shifts, or changes in market microstructure. Conventional performance metrics, such as accuracy or F1 score, measure classification quality rather than profitability, and the two need not be correlated at all. Even so, these metrics can be very useful beyond machine learning, applied to other kinds of trading systems.

This divergence necessitates moving beyond classification metrics to focus on financial diagnostics: risk-adjusted returns, maximum drawdowns, regime-specific performance attribution, and robustness to stochastic volatility.

Reimagining ML metrics for market dynamics

Our path to this recalibration begins by recognizing the fundamental nature of the market: a place of profound asymmetry, where outcomes are rarely binary—success or failure—but rather exist on a spectrum of gains and losses. This is a world where the cost of being wrong often far outweighs the gains of being right, a truth that standard machine learning metrics optimized for symmetry largely ignore.

Trading systems, whether rules-based, analytical, or machine learning-based, must overcome hurdles that would break simpler algorithms designed for less adverse environments:

The underlying statistical properties of asset prices are not constant. Bull markets, bear markets, periods of high volatility, low volatility, crises, and calm phases each demand different trading logic. An algorithm tuned for one regime can be disastrously maladapted for another, rendering its historical "accuracy" moot.

Financial data is notoriously noisy. True predictive signals are often faint whispers lost in the roar of random price fluctuations, news headlines, and the collective irrationality of participants. The signal-to-noise ratio isn't a constant; it fluctuates, making consistent signal detection a monumental task.

Unlike classifying images or diagnosing medical conditions, the act of trading itself can influence the market. Large orders move prices. Successful strategies attract followers, diluting their effectiveness.

Profitable trading opportunities often exist within narrow temporal windows. A signal identified too late is useless. Evaluation metrics must implicitly or explicitly account for the timeliness of decisions.

The ultimate metric is risk-adjusted return, not just predictive accuracy. A model that predicts direction with 70% accuracy but loses more on its incorrect predictions than it gains on its correct ones is financially worthless. Our metrics must bridge this gap.
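The 70% example can be checked with a few lines of arithmetic. The sketch below is illustrative only: the helper name `expectancy` and the win and loss sizes are assumptions chosen for the example, not figures from a real strategy.

```python
def expectancy(hit_rate: float, avg_win: float, avg_loss: float) -> float:
    """Expected return per trade; avg_loss is a positive magnitude."""
    return hit_rate * avg_win - (1.0 - hit_rate) * avg_loss


# Hypothetical: right 70% of the time, but losers are 3x the size of winners.
accurate_but_losing = expectancy(hit_rate=0.70, avg_win=1.0, avg_loss=3.0)

# Hypothetical: right only 40% of the time, but winners are 2x the size of losers.
inaccurate_but_winning = expectancy(hit_rate=0.40, avg_win=2.0, avg_loss=1.0)

print(f"70% accuracy, 1:3 win/loss size: {accurate_but_losing:+.2f} per trade")
print(f"40% accuracy, 2:1 win/loss size: {inaccurate_but_winning:+.2f} per trade")
```

Under these assumed payoffs the more accurate model loses money on average while the less accurate one makes money, which is why accuracy alone cannot stand in for profitability.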

To master this environment, we must transcend the traditional interpretation of machine learning metrics. We must see them not just as indicators of statistical fit, but as proxies for financial outcomes.


The seven pillars of enhanced algorithmic trading

Let's create a new foundation for a more effective evaluation framework by integrating machine learning metrics directly into the design and evaluation of algorithmic trading strategies. These seven pillars represent a technical synthesis that transforms abstract statistical measures into concrete tools.

Precision-recall trade-off or the profit-loss matrix

This is a zero-sum game and the cost of being wrong is often asymmetrical to the reward of being right. Standard precision and recall metrics, while useful, must be viewed through this lens of financial consequence. For a trading algorithm predicting a buy signal:

True positive: A predicted buy signal that results in a profitable trade. This is a successful capture of an opportunity.

False positive: A predicted buy signal that results in a losing trade. This is a direct capital loss.

True negative: No buy signal is predicted, and the market doesn't offer a profitable opportunity (or would result in a loss/flat trade). This is correctly avoiding a bad situation.

False negative: No buy signal is predicted, but the market did offer a profitable opportunity that was missed. This is an opportunity cost, a forgone gain.

Precision, defined as TP/(TP+FP), becomes the measure of the quality of our buy signals—what percentage of our predicted buys actually made money. Recall, TP/(TP+FN), measures the completeness—what percentage of available profitable opportunities did our system actually identify and attempt to capture?

Let’s code this to see an example:

import numpy as np
import pandas as pd

# Sample confusion matrix derived from trade outcomes.
# Binary classification (prediction 1 = Buy, prediction 0 = No Trade):
#   Predict 1, Actual 1 (market up):        profitable trade
#   Predict 1, Actual 0 (market down/flat): losing or flat trade
#   Predict 0, Actual 1 (market up):        missed opportunity
#   Predict 0, Actual 0 (market down/flat): loss correctly avoided
conf_matrix_trades = {
    'true_positives': 150,   # System bought AND market went up (profitable)
    'false_positives': 100,  # System bought AND market went down/flat (losing)
    'true_negatives': 200,   # System didn't buy AND market went down/flat (avoided loss)
    'false_negatives': 50    # System didn't buy AND market went up (missed profit)
}

# Precision: out of trades taken (TP+FP), how many were profitable (TP)?
precision = conf_matrix_trades['true_positives'] / (
    conf_matrix_trades['true_positives'] + conf_matrix_trades['false_positives']
)

# Recall: out of all profitable opportunities (TP+FN), how many did the system take (TP)?
recall = conf_matrix_trades['true_positives'] / (
    conf_matrix_trades['true_positives'] + conf_matrix_trades['false_negatives']
)

print(f"Trade-based Precision: {precision:.4f}")
print(f"Trade-based Recall: {recall:.4f}")

# Now introduce the financial asymmetry: average profit vs. average loss per trade
avg_profit_per_trade = 0.8   # Average percentage gain per successful trade
avg_loss_per_trade = -1.2    # Average percentage loss per unsuccessful trade

# Total return across all trades taken (sum of per-trade percentage outcomes)
total_return_from_trades = (
    conf_matrix_trades['true_positives'] * avg_profit_per_trade
    + conf_matrix_trades['false_positives'] * avg_loss_per_trade
)
print(f"Total return from executed trades: {total_return_from_trades:.2f}%")

# Profitability depends on the balance:
# Total Profit = (TP * Avg_Profit) + (FP * Avg_Loss)
# For profitability, Total Profit > 0
# TP * Avg_Profit > - (FP * Avg_Loss)
# TP * Avg_Profit > FP * |Avg_Loss|
# Divide by (TP + FP):
# (TP / (TP + FP)) * Avg_Profit > (FP / (TP + FP)) * |Avg_Loss|
# Precision * Avg_Profit > (1 - Precision) * |Avg_Loss|
# Precision * Avg_Profit + Precision * |Avg_Loss| > |Avg_Loss|
# Precision * (Avg_Profit + |Avg_Loss|) > |Avg_Loss|
# Precision > |Avg_Loss| / (Avg_Profit + |Avg_Loss|)
# This gives us the critical "profitability threshold" for precision
profitability_threshold_precision = abs(avg_loss_per_trade) / (
    avg_profit_per_trade + abs(avg_loss_per_trade)
)
print(f"Minimum Precision required for profitability: {profitability_threshold_precision:.4f}")

# Compare actual precision to the threshold
if precision > profitability_threshold_precision:
    print("Actual precision is above the profitability threshold.")
else:
    print("Actual precision is below or at the profitability threshold. Likely unprofitable given these average outcomes.")

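The mathematics here is brutal and clear. The total return on the trades taken is:

$$\text{Total Profit} = TP \cdot \bar{P} + FP \cdot \bar{L}$$

where $\bar{P} > 0$ is the average profit per winning trade and $\bar{L} < 0$ is the average loss per losing trade. For the system to be profitable on the trades it takes, this value must be positive. This translates directly to a critical precision threshold:

$$\text{Precision} > \frac{|\bar{L}|}{\bar{P} + |\bar{L}|}$$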

Below this line in the sand, no amount of recall can save the strategy; it's simply picking too many losers relative to its winners, weighted by the average outcome magnitudes. This is the first perspective shift—moving from statistical correctness to the economic viability demanded by the market.
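To get a feel for how quickly the required precision moves with the payoff ratio, the break-even condition Precision > |Avg_Loss| / (Avg_Profit + |Avg_Loss|) can be swept over a few ratios. This is a minimal sketch: the function name `breakeven_precision` and the list of payoff ratios are assumptions made for illustration.

```python
def breakeven_precision(avg_profit: float, avg_loss: float) -> float:
    """Minimum precision for a positive expected return on trades taken.

    avg_profit is the average gain on winners (> 0); avg_loss is the average
    loss on losers, passed either as a negative number or as a magnitude.
    """
    loss = abs(avg_loss)
    return loss / (avg_profit + loss)


# Hypothetical payoff ratios (average win divided by average loss magnitude).
payoff_ratios = [0.5, 2 / 3, 1.0, 2.0, 3.0]
thresholds = {r: breakeven_precision(avg_profit=r, avg_loss=-1.0) for r in payoff_ratios}

for ratio, p_min in thresholds.items():
    print(f"payoff ratio {ratio:.2f} -> precision must exceed {p_min:.1%}")
```

The 0.8% average win against a 1.2% average loss used earlier corresponds to a payoff ratio of 2/3, which is why that example needs more than 60% precision. A strategy whose winners are three times its losers breaks even at 25%.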

ROC curves and the opportunity cost framework

The ROC curve, typically a visualization of the trade-off between True Positive Rate (sensitivity, or recall) and False Positive Rate (1 − specificity), transforms into a mapping of financial opportunity costs. The standard curve helps choose a classification threshold by visualizing how TPR and FPR change. In trading, each point on this curve doesn't just represent a statistical balance; it represents a potential operating point for our algorithm, where a greater willingness to trigger trades (higher TPR) comes at the cost of accepting more bad signals (higher FPR).

Instead of optimizing for the point closest to the top-left corner (FPR = 0, TPR = 1) or maximizing the Area Under the Curve (AUC-ROC), both of which implicitly weight False Positives and False Negatives symmetrically, we must optimize for maximum financial outcome.

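The relationship between the ROC curve and profitability at a given threshold t (which determines the specific TPR(t) and FPR(t)) can be written, in the unweighted form used in the snippet that follows, as:

$$\text{Profit}(t) = \text{TPR}(t) \cdot \bar{P} - \text{FPR}(t) \cdot |\bar{L}|$$

where $\bar{P}$ is the average profit on a true positive and $|\bar{L}|$ is the average loss magnitude on a false positive. This assumes that we only trade when the model gives a positive signal; the negative class corresponds to no trade (a short trade could be treated symmetrically, but for simplicity we focus on long signals).

The optimal trading threshold t* is therefore:

$$t^* = \arg\max_t \left[ \text{TPR}(t) \cdot \bar{P} - \text{FPR}(t) \cdot |\bar{L}| \right]$$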

This point rarely coincides with the traditional ML optimum. You can use the next snippet for that:

import numpy as np
import matplotlib.pyplot as plt
from sklearn.metrics import roc_curve

# Example data: true labels (1 = Positive, 0 = Negative) and predicted scores
y_true = np.array([1, 0, 1, 1, 0, 0, 1, 1, 0, 0])
y_scores = np.array([0.9, 0.1, 0.8, 0.85, 0.2, 0.3, 0.95, 0.7, 0.05, 0.2])

# Average profit and loss for trading (loss given as a positive magnitude)
avg_profit = 10  # Example average profit for a true positive
avg_loss = 5     # Example average loss for a false positive

# Calculate ROC curve data
fpr, tpr, thresholds = roc_curve(y_true, y_scores)

# Profit at each threshold: reward for hits minus cost of false alarms
profit = tpr * avg_profit - fpr * avg_loss

# Find the threshold that maximizes profit
optimal_threshold_index = np.argmax(profit)
optimal_threshold = thresholds[optimal_threshold_index]
optimal_profit = profit[optimal_threshold_index]

print(f"Optimal threshold: {optimal_threshold}")
print(f"Profit at optimal threshold: {optimal_profit}")

This perspective shift reveals that a trading system needs a different kind of calibration than a standard classifier. We are not just identifying a boundary; we are identifying a threshold that maximizes the expected return, directly incorporating the asymmetric costs of incorrect decisions.
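The profit expression in the snippet above weights TPR and FPR without regard to how often each class occurs. When profitable periods are rare, one possible refinement is to weight each rate by its class frequency. The sketch below does this in plain Python; the base-rate weighting and the helper names `roc_points` and `expected_profit` are assumptions added for illustration, not part of the original formulation.

```python
def roc_points(y_true, y_scores):
    """Return (threshold, TPR, FPR) for each distinct score used as a cut-off."""
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    points = []
    for t in sorted(set(y_scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, y_scores) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(y_true, y_scores) if s >= t and y == 0)
        points.append((t, tp / n_pos, fp / n_neg))
    return points


def expected_profit(tpr, fpr, base_rate, avg_profit, avg_loss):
    """Expected profit per observation; avg_loss is a positive magnitude."""
    return base_rate * tpr * avg_profit - (1.0 - base_rate) * fpr * avg_loss


# Same toy labels and scores as the snippet above.
y_true = [1, 0, 1, 1, 0, 0, 1, 1, 0, 0]
y_scores = [0.9, 0.1, 0.8, 0.85, 0.2, 0.3, 0.95, 0.7, 0.05, 0.2]
avg_profit, avg_loss = 10, 5
base_rate = sum(y_true) / len(y_true)  # share of profitable periods

best_threshold, best_profit = max(
    ((t, expected_profit(tpr, fpr, base_rate, avg_profit, avg_loss))
     for t, tpr, fpr in roc_points(y_true, y_scores)),
    key=lambda pair: pair[1],
)
print(f"Profit-maximizing threshold: {best_threshold}")
print(f"Expected profit per period: {best_profit:.2f}")
```

On this balanced toy sample the weighting only rescales the profit curve, so the chosen threshold does not change. The difference shows up when positives are a small fraction of the data, where the false-positive term carries far more weight.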

Matthews Correlation Coefficient as the balanced compass for alpha

The Matthews Correlation Coefficient (MCC) is often overlooked in simpler analyses, but it holds particular power for algorithmic trading because it provides a single, balanced measure of classification quality that accounts for all four quadrants of the confusion matrix (TP, TN, FP, FN), even with severe class imbalance.

MCC is defined as:

$$\text{MCC} = \frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}}$$

It ranges from -1—perfect inverse prediction—to +1—perfect prediction—with 0 indicating performance no better than random guessing. Why is this key for trading?

Profitable trading opportunities—the positive class—are often rare events in the market. A simple accuracy metric or even F1 score can be misleading in such imbalanced scenarios. MCC provides a more honest view of the algorithm's performance across both positive and negative cases, giving a better sense of its overall predictive skill, not just its ability to guess the majority class.

An MCC close to -1 is typically bad in standard classification. In trading, it could be gold. A consistent negative correlation might indicate a model that is perfectly wrong, one that can be profitably traded in reverse. MCC helps explicitly identify such an inverse edge.

A high MCC suggests the model's predictions are genuinely correlated with market movement outcomes in a way that considers both hits and misses on both sides. This provides a stronger indication of a real edge than metrics easily skewed by imbalance.
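The imbalance and inverse-edge points can be made concrete with a small, self-contained comparison. The data here is entirely hypothetical (5 profitable periods out of 100), and the helper names are assumptions for the sketch. Following a common convention, MCC is reported as 0 when its denominator is zero.

```python
import math


def confusion_counts(predictions, outcomes):
    """Count TP, FP, TN, FN for binary predictions and outcomes."""
    tp = sum(1 for p, a in zip(predictions, outcomes) if p == 1 and a == 1)
    fp = sum(1 for p, a in zip(predictions, outcomes) if p == 1 and a == 0)
    tn = sum(1 for p, a in zip(predictions, outcomes) if p == 0 and a == 0)
    fn = sum(1 for p, a in zip(predictions, outcomes) if p == 0 and a == 1)
    return tp, fp, tn, fn


def accuracy(predictions, outcomes):
    tp, fp, tn, fn = confusion_counts(predictions, outcomes)
    return (tp + tn) / (tp + fp + tn + fn)


def mcc(predictions, outcomes):
    tp, fp, tn, fn = confusion_counts(predictions, outcomes)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


# Hypothetical market: only 5 profitable periods out of 100.
outcomes = [1] * 5 + [0] * 95

never_trade = [0] * 100                          # always predicts the majority class
skilled = [1, 1, 1, 1, 0] + [1] * 4 + [0] * 91   # catches 4 of 5, with 4 false alarms
inverted = [1 - p for p in skilled]              # the same signal traded in reverse

for name, preds in [("never trade", never_trade), ("skilled", skilled), ("inverted", inverted)]:
    print(f"{name:12s} accuracy={accuracy(preds, outcomes):.2f}  MCC={mcc(preds, outcomes):+.3f}")
```

Both the do-nothing model and the skilled one score 95% accuracy on this sample, yet only the skilled one has a clearly positive MCC. Flipping its predictions flips the sign of MCC, which is the inverse edge described above.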

While the standard MCC is powerful, we can push it further. This snippet introduces a return-adjusted MCC concept:

import numpy as np

def calculate_trading_mcc(predictions, actual_outcomes, trade_returns_for_positives, trade_returns_for_negatives):
    """
    Calculate a Matthews Correlation Coefficient for trading, considering
    actual trade outcomes and potentially return magnitudes.

    Parameters:
    predictions - binary predictions (e.g., 1 for Buy, 0 for No Trade)
    actual_outcomes - binary actual results (e.g., 1 for Market Up/Profitable, 0 for Market Down/Flat/Loss)
        This should align with what constitutes TP/FN for the prediction=1 case.
    trade_returns_for_positives - List of returns for instances where actual_outcome is 1,
        in order of occurrence
    trade_returns_for_negatives - List of returns for instances where actual_outcome is 0,
        in order of occurrence
    """
    if len(predictions) != len(actual_outcomes):
        raise ValueError("Predictions and actual outcomes must have the same length")
    n_positive = sum(a == 1 for a in actual_outcomes)
    if (len(trade_returns_for_positives) != n_positive
            or len(trade_returns_for_negatives) != len(actual_outcomes) - n_positive):
        raise ValueError("Return lists must match the number of positive and negative outcomes")

    tp = sum((p == 1) and (a == 1) for p, a in zip(predictions, actual_outcomes))
    fp = sum((p == 1) and (a == 0) for p, a in zip(predictions, actual_outcomes))
    tn = sum((p == 0) and (a == 0) for p, a in zip(predictions, actual_outcomes))
    fn = sum((p == 0) and (a == 1) for p, a in zip(predictions, actual_outcomes))

    # Standard MCC, reported as 0 when the denominator is zero
    denominator = np.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc_standard = (tp * tn - fp * fn) / denominator if denominator != 0 else 0.0

    # Return-weighted variant (non-standard, for illustration only): scale the
    # standard MCC by the ratio of summed TP returns to summed FP losses.
    # Walk through the outcomes in order, drawing each instance's return from
    # the matching list, and accumulate returns only for trades actually taken.
    pos_returns = iter(trade_returns_for_positives)
    neg_returns = iter(trade_returns_for_negatives)
    total_tp_returns = 0.0
    total_fp_returns = 0.0
    for p, a in zip(predictions, actual_outcomes):
        ret = next(pos_returns) if a == 1 else next(neg_returns)
        if p == 1 and a == 1:
            total_tp_returns += ret
        elif p == 1 and a == 0:
            total_fp_returns += ret

    # Avoid division by zero or a zero-loss scenario for weighting
    if total_tp_returns > 0 and abs(total_fp_returns) > 0:
        # Weight MCC by the profit/loss ratio from trades taken
        mcc_return_weighted = mcc_standard * (total_tp_returns / abs(total_fp_returns))
    else:
        mcc_return_weighted = mcc_standard  # No winning trades or no losses to weight by

    return {
        "mcc_standard": mcc_standard,
        "mcc_return_weighted": mcc_return_weighted  # A custom, non-standard variant
    }

# Example data
predictions = [1, 1, 0, 1, 0]      # Predicted buy/no trade actions
actual_outcomes = [1, 0, 1, 1, 0]  # Actual market outcomes (1 = profitable, 0 = loss/flat)
trade_returns_for_positives = [0.05, 0.07, 0.03]  # Returns for the periods where actual outcome is 1
trade_returns_for_negatives = [-0.02, -0.05]      # Returns for the periods where actual outcome is 0

# Call the function
result = calculate_trading_mcc(predictions, actual_outcomes, trade_returns_for_positives, trade_returns_for_negatives)

# Output the results
print(f"Standard MCC: {result['mcc_standard']}")
print(f"Return-weighted MCC: {result['mcc_return_weighted']}")

The power of MCC lies in its ability to summarize the confusion matrix into a single score that isn't distorted by class imbalance. A high MCC for a trading signal indicates a strong, balanced relationship between the signal and actual market outcomes, suggesting genuine predictive power beyond random chance or simply following a trend.

G-mean and balanced accuracy